Our Verdict

70%

Surprisingly competitive gaming performance, even in ray tracing tests, and there's loads of memory too, but Intel really needs to work on its drivers.

It’s quite remarkable to hold the Acer Predator BiFrost Intel Arc A770 card in your hand. Five years ago, no one would have thought that Acer would not only enter the graphics card market, but that its first cards would also be based on an Intel GPU. Not only that, but this graphics card is surprisingly competitive against Nvidia and AMD’s chips.

That’s a remarkable achievement for Intel as, let’s face it, the company’s previous graphical efforts have been woeful. Aside from the general poor gaming performance of its integrated graphics systems, as well as the underwhelming Xe cards, Intel also wasted a colossal load of resources trying to make an x86-based GPU with the failed Larrabee project. Going further back, the Intel i740 from the 1990s was plagued with compatibility problems too.

But Intel has done it. Hiring some of the world’s top GPU experts and going all out on a brand new GPU design has worked. It even does ray tracing, and has its own resolution scaling tech. It’s not perfect (more on that later), but it really shows the potential for another competitor in the GPU market that can take on AMD and Nvidia.

At Custom PC, we’ve been reviewing the latest gaming GPUs since 2003, and we run a number of grueling GPU benchmarks in order to gauge performance. Our game tests include measuring the frame rate in Cyberpunk 2077, Doom Eternal, and Metro Exodus, all with and without ray tracing, and we also test with Assassin’s Creed Valhalla. For more information, see our How we test page.

The Intel Arc A770 GPU

Let’s start by taking a look at the Intel Arc A770 GPU, which originally came out on Intel’s own branded cards in October 2022. Intel has since discontinued the Arc A770 Limited Edition 16GB cards from its own lineup, but Acer is carrying on making 16GB Arc A770 cards such as this one. That leaves the card in a unique position, as its price pits it against 8GB cards from Nvidia and AMD, such as the GeForce RTX 4060 Ti and Radeon RX 7600.

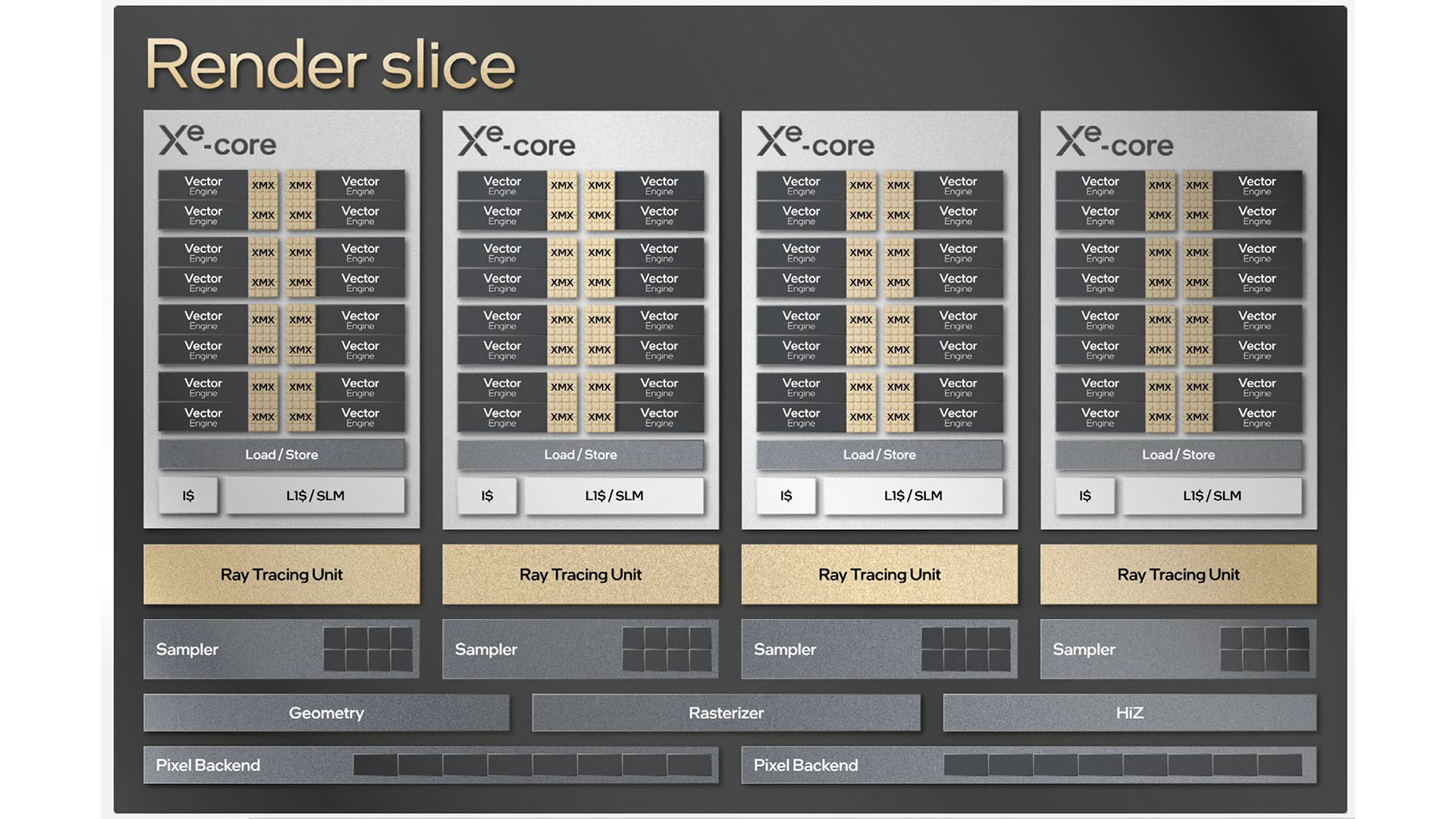

Like Nvidia and AMD’s GPUs, the Arc A770 is divided into blocks that then contain the key processing units. Intel’s terminology gives us the Xe-core block, each of which contains 16 of Intel’s Xe Vector Engines (XVE). Each XVE contains a register file, as well as an eight-unit floating point block, an eight-unit integer block, and a two-unit extended math block.

It’s the floating point units that you can think of as being equivalent to the CUDA cores and stream processors in Nvidia and AMD’s GPUs when it comes to shader power. However, just as Nvidia’s CUDA cores and AMD’s stream processors are very far from being equivalent to each other, the same goes here – you can’t assume that a given number of Intel FP units is better or worse than the same number of Nvidia or AMD equivalents.

With eight FP units in each XVE, each Xe-core block basically gives you 128 stream processors. In addition, there are also 16 of Intel’s XMX units in each Xe-core, which are Intel’s equivalent of Nvidia’s Tensor cores – matrix multiply units that are geared towards processing AI algorithms. Like Nvidia, Intel has also developed its own super-sampling resolution scaling tech to take advantage of its matrix cores.

Unlike Nvidia and AMD, however, Intel hasn’t included the ray tracing processor inside the Xe-core. Instead, a separate ray tracing core is connected to each Xe-core, coming after the thread sorting unit. Each RT core has two traversal pipeline and box intersection stages, and then a single triangle/quad intersection stage.

Intel has also given each RT core a bounding volume hierarchy (BVH) cache unit, and full hardware for processing BVH traversal – a process that AMD RDNA 2 GPUs, such as the Radeon RX 6700 XT, performed using the general GPU shaders, rather than the ray accelerators, which negatively impacted performance.

The bigger building block is then the Render Slice, which contains four Xe-cores, each of which communicates with a thread sorting unit and ray tracing unit, and then goes through to the final phases, such as geometry and rasterization, and pixel backend processes.

There are eight Render Slices in the Arc A770, effectively giving it 4,096 stream processors, 512 XMX cores, and 32 RT cores. It’s all packed into a 406mm² die, which is produced on a 6nm process and contains 21,700 million transistors.
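As a quick sanity check, the totals above can be rebuilt from the per-block counts given in this section. This is only a minimal sketch of the arithmetic; the constant and function names are our own and don't come from Intel.

```python
# Unit counts per level of the hierarchy, as quoted in the text above.
RENDER_SLICES = 8
XE_CORES_PER_SLICE = 4
XVES_PER_XE_CORE = 16
FP_UNITS_PER_XVE = 8
XMX_PER_XE_CORE = 16
RT_CORES_PER_XE_CORE = 1  # one RT core is connected to each Xe-core


def arc_a770_totals():
    """Multiply the per-block counts up to whole-GPU totals."""
    xe_cores = RENDER_SLICES * XE_CORES_PER_SLICE
    return {
        "xe_cores": xe_cores,
        "stream_processors": xe_cores * XVES_PER_XE_CORE * FP_UNITS_PER_XVE,
        "xmx_units": xe_cores * XMX_PER_XE_CORE,
        "rt_cores": xe_cores * RT_CORES_PER_XE_CORE,
    }


totals = arc_a770_totals()
print(totals)
```

The result matches the 4,096 stream processors, 512 XMX cores, and 32 RT cores quoted for the full chip.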

The Acer BiFrost Intel Arc A770 OC card

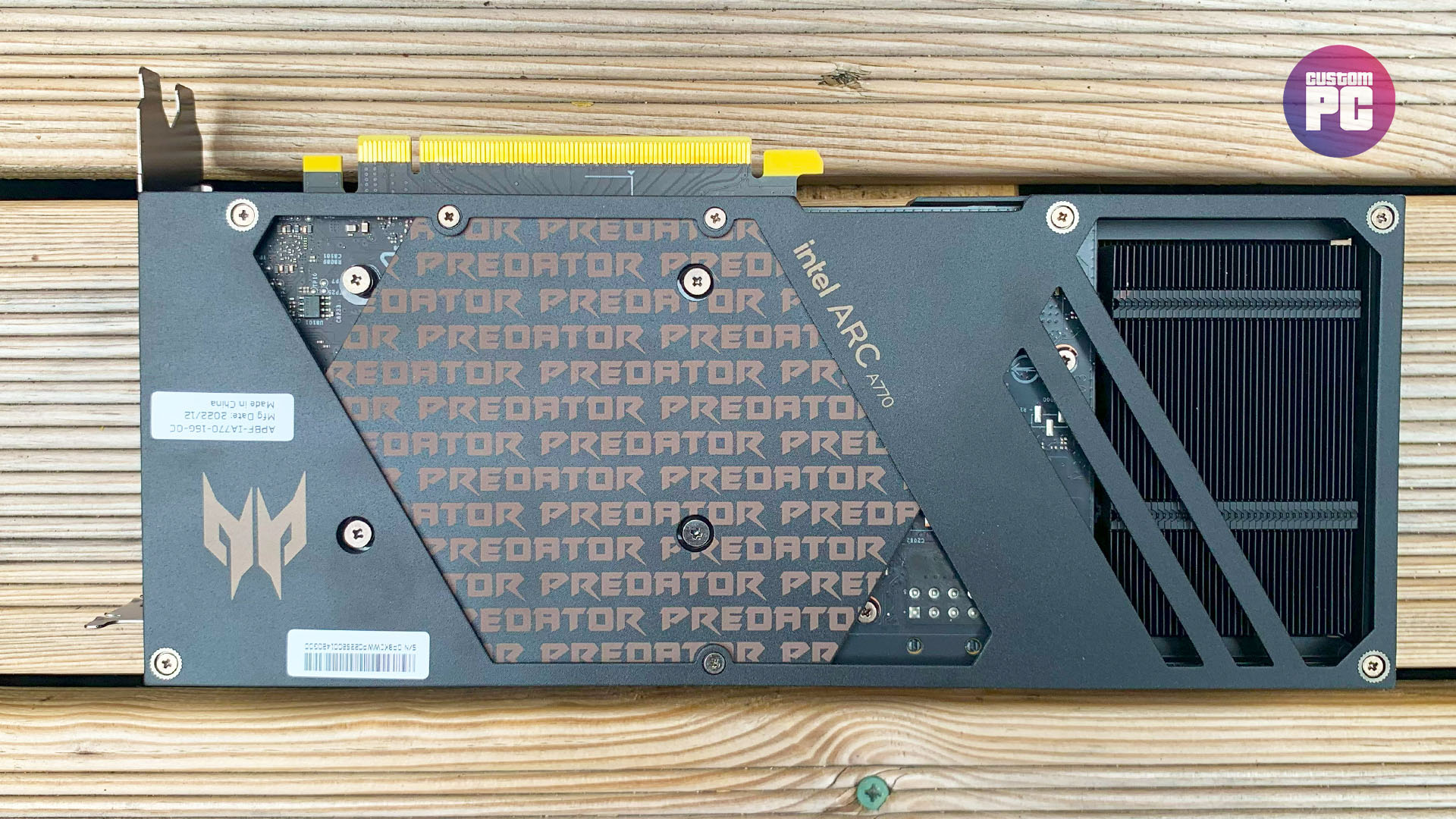

Acer has taken the boxy design of the standard Intel Arc A770 card and given it a full ‘gamer’ makeover based on its Predator brand. You get two fans – a 92mm RGB spinner on the end, and an Acer Aeroblade fan in the middle.

The latter has a clever design – it looks like the blower fans used on old Nvidia and AMD reference cards, but its use of metal blades improves airflow, and it has lighting behind it too. Thankfully, it doesn’t ever spin up to the point where it makes a horrendous noise like those old blower coolers either.

That said, the GPU did get hot when running at full load, hitting 82°C in our tests, and the backplate became hot to the touch as well. The cooler is clearly doing its job, but we’d be interested to see whether it would be better or worse with a standard two or three-fan setup, rather than the Aeroblade and big fan on the end.

The cooler gives the GPU room to boost as well – the Arc A770 has a standard quoted gaming clock speed of 2100MHz at the default settings, but the Acer was regularly boosting to a peak of 2400MHz in our tests. You can also enable two other clock speed modes in Acer’s BiFrost software, a Silent Mode that drops the boost clock to 2250MHz, and an OC mode that increases the base clock from 2100MHz to 2200MHz, but (rather pointlessly) keeps the stock boost clock of 2400MHz. We saw no performance difference beyond the margin of error between the two modes in our testing.

Meanwhile, the RGB lighting looks a bit garish when you first fire up the card – it’s not a subtle look. The rainbow lighting spills out of the large 92mm fan on the end, and you also get RGB lighting under the Predator logo on the top edge of the card. Thankfully, you can tweak the lighting to your own style with Acer’s Predator BiFrost software, which gives you a choice of lighting effects and colors.

Of course, other people’s tastes vary, and you might love the look of the Acer BiFrost, but it looks a bit over the top for our tastes. The Sapphire Nitro+, Asus ROG Strix, and Zotac Airo cards all show how RGB lighting can be integrated into a much classier-looking graphics card.

Intel Arc A770 compatibility problems

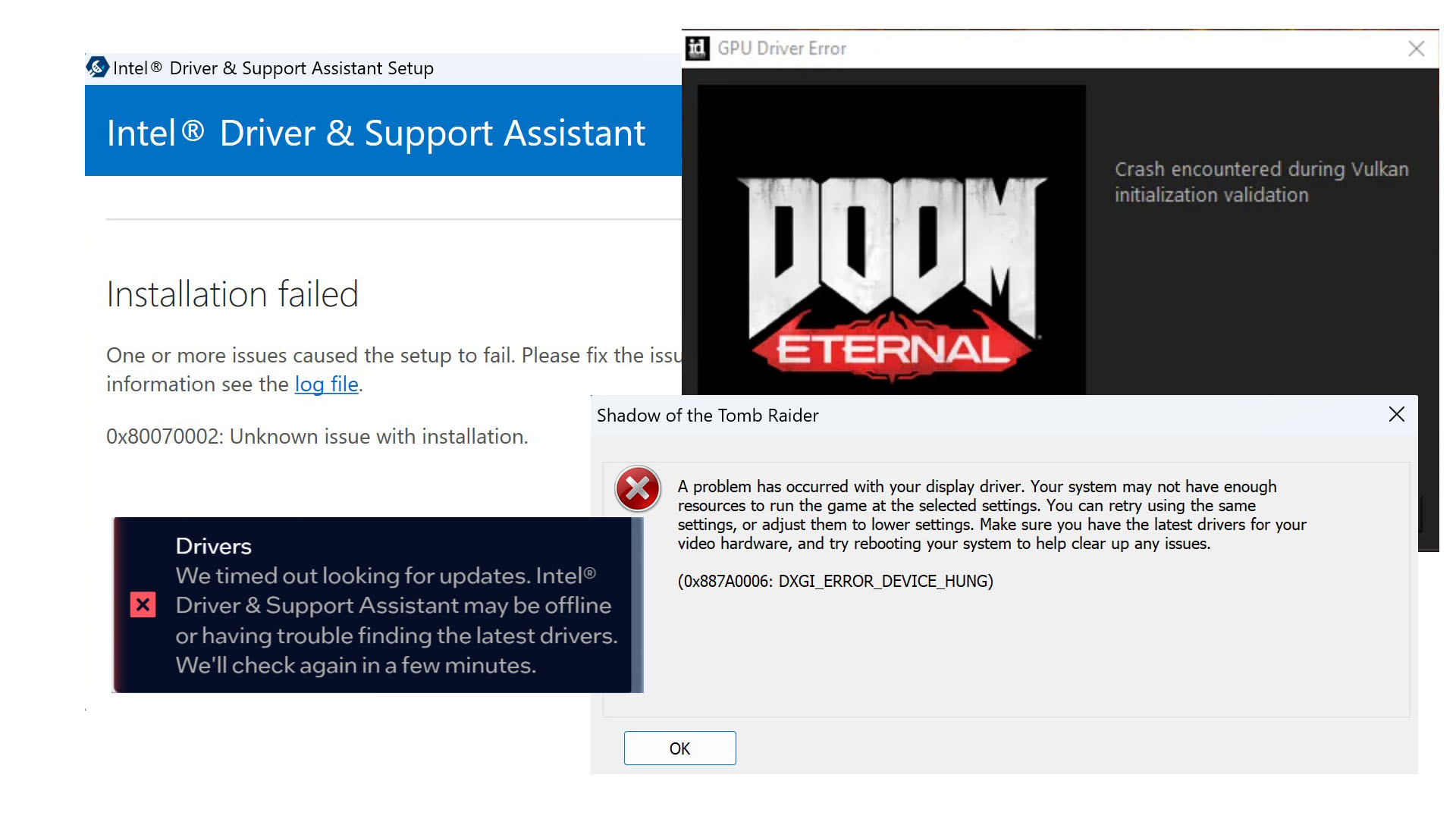

Before we get to performance, we want to address the biggest problem for the Intel Arc A770, even several months after launch: compatibility. Only one of our test games (Cyberpunk 2077) fired up first time on this GPU.

Every other game required a frustrating battle to get it to work on our AMD Ryzen 9 5900X test rig. Vulkan runtime errors required us to uninstall and reinstall the Intel GPU drivers to get Doom Eternal running, for example, and Metro Exodus had to be uninstalled and reinstalled to stop it stalling on startup. Irritatingly, Windows startup would also always greet us with a driver update notification with a red cross, saying that the Intel software had timed out looking for driver updates – this didn’t cause any actual problems, but it’s a needless annoyance.

We also tried to get Shadow of the Tomb Raider running, so we could look at DirectX 11 vs DirectX 12 performance, but it simply wouldn’t run on our test rig in the former mode, despite our best efforts, coming up with either a display driver error, or simply just not loading the game. We also got an ‘Installation failed’ error message after installing the latest Intel GPU driver, even though the driver had actually installed itself fine.

We did, however, run our Total War: Warhammer II benchmark in DirectX 11 mode on the Arc A770, and it was absolutely fine, averaging 118fps at 1080p. There’s been a lot of talk about Intel’s GPUs struggling when they’re not running DirectX 12 games, and Intel has seemingly only prioritized these games at launch, but it looks as though it’s retroactively on the case with driver optimizations. That said, there are a lot of old PC games out there, and if you still run a lot of them on your PC, we’d advise picking up an Nvidia or AMD GPU, rather than the Intel Arc A770.

Intel is working on regular driver updates for the Intel Arc lineup, but there’s clearly some work to be done before having an Arc GPU is a friendly user experience – we rarely experience these sorts of issues on the latest Nvidia and AMD GPUs these days, particularly so long after launch.

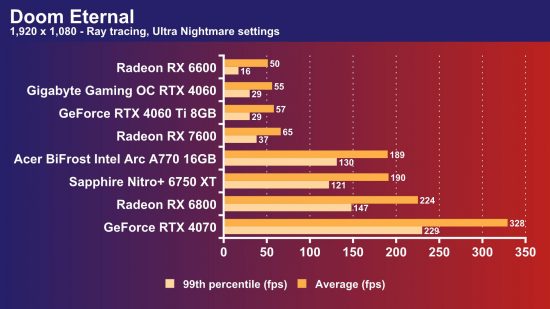

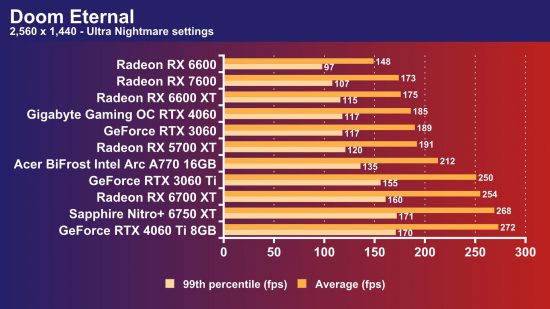

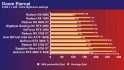

Intel Arc A770 Doom Eternal frame rate

The average Intel Arc A770 Doom Eternal frame rate is 287fps at 1,920 x 1,080, and 212fps at 2,560 x 1,440.

We’re going to kick off the performance discussion with the headline result, which is that the Acer BiFrost Intel Arc A770 is 231 percent faster than the GeForce RTX 4060 Ti 8GB in Doom Eternal when you enable ray tracing. That might seem astonishing, but it’s simply a result of the Acer card coming with 16GB of memory, and the Nvidia GPU being limited to just 8GB.

This test really goes hard on VRAM usage, causing 8GB cards to stall and stutter, while 16GB cards can pull away and rack up huge frame rates. Nvidia has now released the GeForce RTX 4060 Ti 16GB, of course, but for a silly starting price of $499 – a long way above the price of this Acer card.

Anyway, you can run Doom Eternal at Ultra Nightmare settings with ray tracing enabled on the Acer card, and get an average of 190fps, with a 130fps 99th percentile frame rate. That’s roughly equivalent to the performance of the Radeon RX 6750 XT.
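If you prefer to think in frame times, the two figures above convert easily. The snippet below is a small sketch, assuming the 99th percentile frame rate means that 99 percent of frames were delivered at least that quickly; the helper name is our own.

```python
def frame_time_ms(fps: float) -> float:
    """Convert a frame rate in fps into the time spent on each frame, in ms."""
    return 1000.0 / fps


# Doom Eternal with ray tracing on the Acer card, as quoted above.
average_ms = frame_time_ms(190)
percentile_99_ms = frame_time_ms(130)

print(f"Average frame time: {average_ms:.1f}ms")
print(f"99th percentile frame time: {percentile_99_ms:.1f}ms")
```

Both values sit comfortably under the 16.7ms per frame needed for a steady 60fps.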

Without ray tracing enabled, the Intel GPU is still ahead of the Radeon RX 7600 in this game, and it holds its own against the GeForce RTX 4060 as well, beating it at 1440p and not being far behind it at 1080p. In short, this GPU is a great all-round option for Doom Eternal, even if you don’t enable ray tracing – a great result.

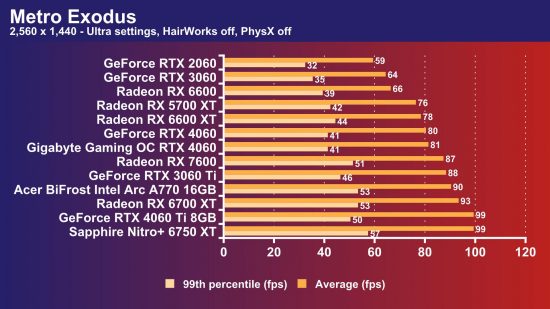

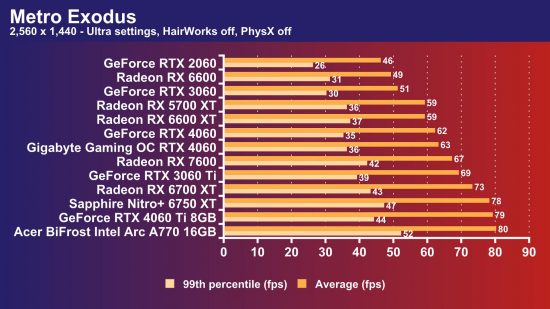

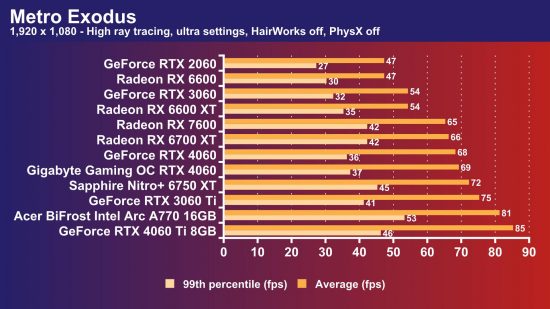

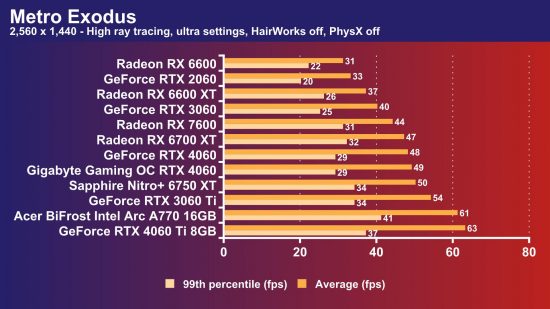

Intel Arc A770 Metro Exodus frame rate

The average Intel Arc A770 Metro Exodus frame rate is 90fps at 1,920 x 1,080, and 80fps at 2,560 x 1,440.

Intel has clearly put a lot of work into optimizing the Arc A770 drivers for Metro Exodus, and it has paid off. It tops the graphs in this game at 1440p, even beating the GeForce RTX 4060 Ti 8GB, with an average of 80fps and a solid 99th percentile result of 52fps.

Impressively, the Arc A770 can handle this game with ray tracing enabled as well, without needing assistance from resolution scaling tech. Run it at the High ray tracing preset and it averages 81fps, with a 53fps 99th percentile result.

This again puts it in the same league as the GeForce RTX 4060 Ti 8GB. It can even maintain a 61fps average in this game at 1440p with ray tracing enabled. Keeping up with Nvidia’s latest GPUs in a ray tracing benchmark is no easy feat, and hats off to Intel for achieving it with this GPU.

Intel Arc A770 Cyberpunk 2077 frame rate

The average Intel Arc A770 Cyberpunk 2077 frame rate is 69fps at 1,920 x 1,080, and 49fps at 2,560 x 1,440.

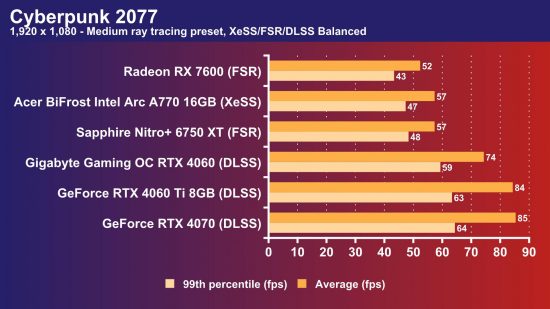

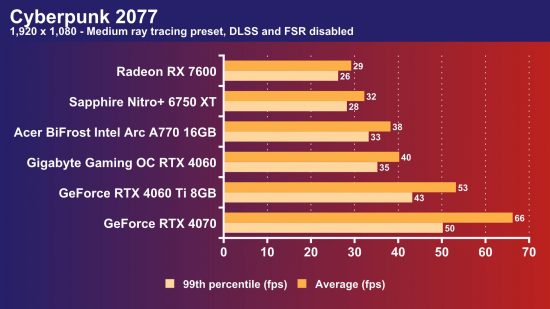

Ray tracing in Cyberpunk 2077 is where the Intel Arc A770 starts to fall behind Nvidia. Run this game at the Medium ray tracing preset at 1080p, and the Arc A770 can only average 38fps, compared with 53fps on the RTX 4060 Ti 8GB. That said, though, the Intel GPU is ahead of the AMD competition here, beating both the Radeon RX 6750 XT and Radeon RX 7600.

This game also gave us a chance to test Intel’s XeSS resolution scaling tech, which works well – the game looks good and you get a performance boost as well. With XeSS on Balanced, and the Medium ray tracing preset enabled, the Arc A770 averaged 57fps with a 47fps 99th percentile result – that’s solidly playable, and faster than the AMD Radeon RX 7600 with FSR on Balanced.

However, Nvidia has got this area sewn up with DLSS. The GeForce RTX 4060 averages 74fps at the same preset with DLSS on Balanced, and you can even run it at the Ultra ray tracing preset if you enable Nvidia’s latest DLSS 3 AI frame-generation tech.

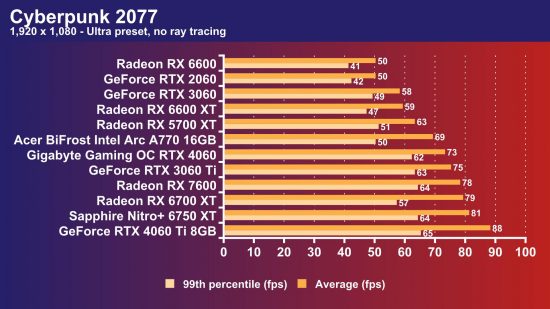

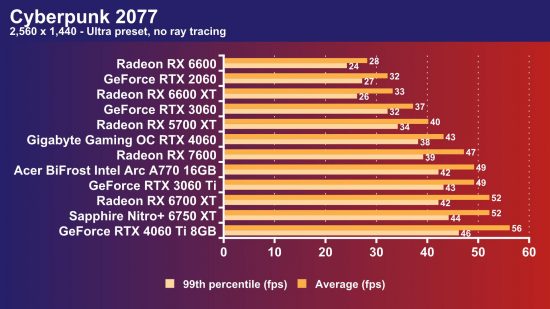

If you’re not bothered about ray tracing, the Intel Arc A770 can handle this game well at 1,920 x 1,080, averaging 69fps with a 50fps 99th percentile. That’s not as quick as the RTX 4060 and Radeon RX 7600, but it’s fast enough to play the game smoothly.

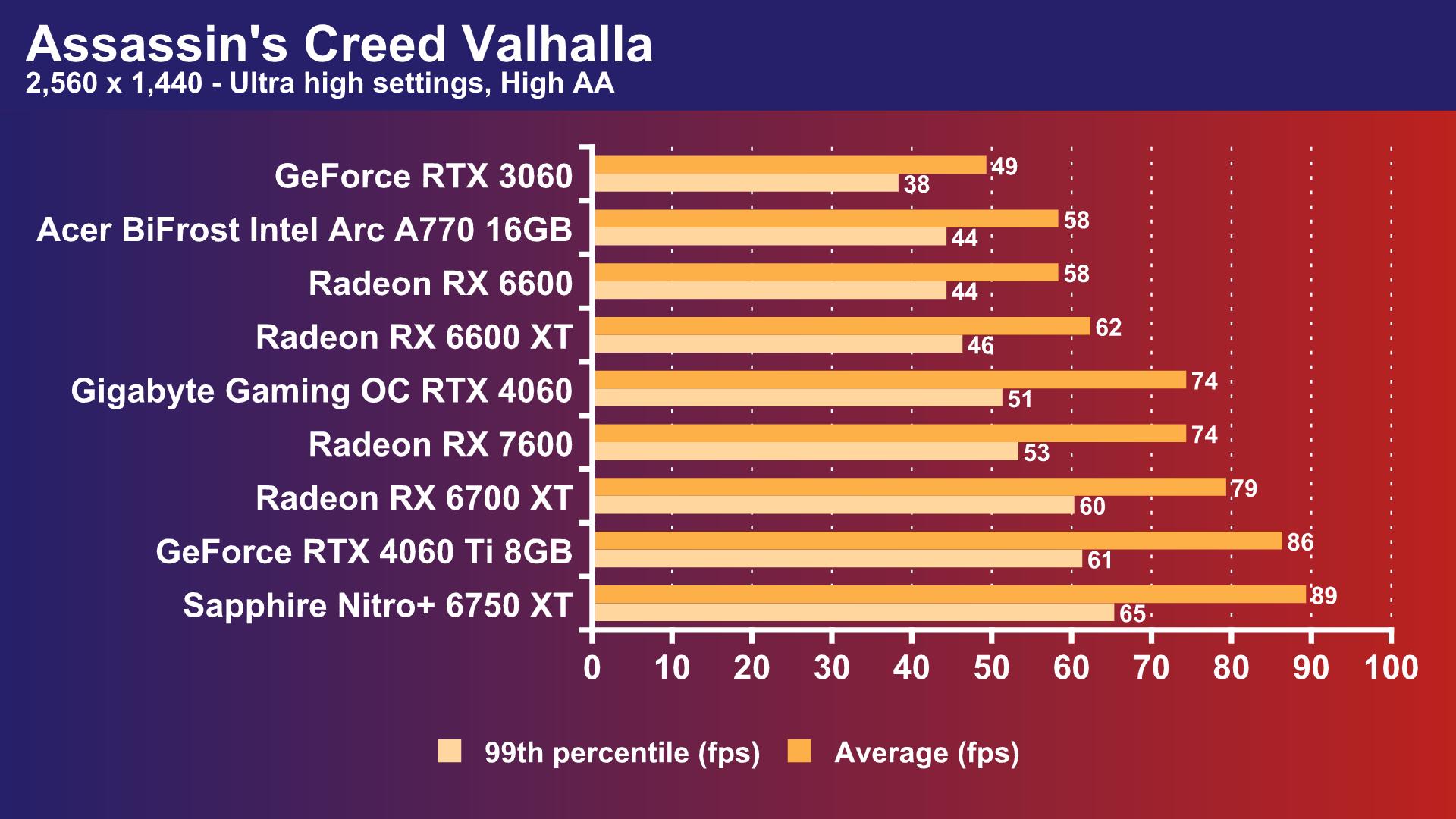

Intel Arc A770 Assassin’s Creed Valhalla frame rate

The average Intel Arc A770 Assassin’s Creed Valhalla frame rate is 71fps at 1,920 x 1,080, and 58fps at 2,560 x 1,440.

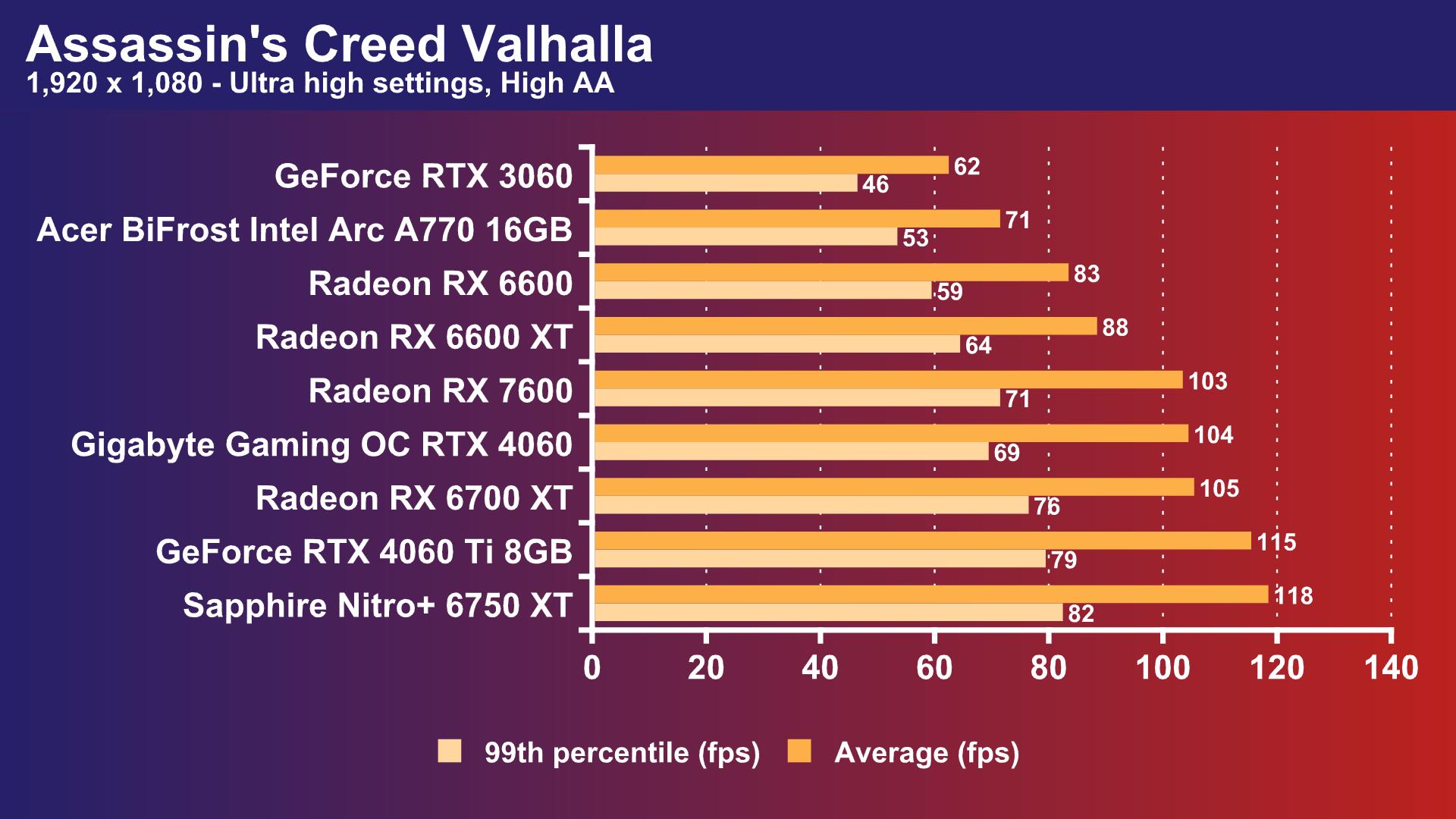

Where the Intel Arc A770 really starts to lag behind the competition is in our Assassin’s Creed Valhalla benchmark. It’s not slow, but it’s disappointingly behind the competition. Run it at 1080p with Ultra high settings, and the Radeon RX 7600 averaged 103fps, compared with just 71fps on the Arc A770.

The Intel GPU is faster than the GeForce RTX 3060 in this test, but it’s beaten by the last-gen AMD Radeon RX 6600. You also start to notice the Intel GPU’s slower frame rate when you’re playing this game at 1440p, while the RTX 4060 and Radeon RX 7600 are noticeably smoother.
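To put the 1080p and 1440p averages from our four test games side by side, here's a rough sketch that works out how much of the 1080p frame rate the Arc A770 keeps at 1440p. It only uses the headline averages quoted in this review (without ray tracing); the variable and function names are our own.

```python
# Average frame rates quoted in this review: (1080p fps, 1440p fps).
RESULTS = {
    "Doom Eternal": (287, 212),
    "Metro Exodus": (90, 80),
    "Cyberpunk 2077": (69, 49),
    "Assassin's Creed Valhalla": (71, 58),
}

PIXELS_1080P = 1920 * 1080
PIXELS_1440P = 2560 * 1440


def retained_fraction(fps_1080p: float, fps_1440p: float) -> float:
    """Share of the 1080p frame rate that survives the move to 1440p."""
    return fps_1440p / fps_1080p


# 1440p has roughly 1.78x as many pixels to render as 1080p.
pixel_ratio = PIXELS_1440P / PIXELS_1080P
retention = {game: retained_fraction(*fps) for game, fps in RESULTS.items()}

print(f"1440p renders {pixel_ratio:.2f}x the pixels of 1080p")
for game, share in retention.items():
    print(f"{game}: keeps {share:.0%} of its 1080p frame rate")
```

In every game, the frame rate falls by much less than the rise in pixel count would suggest, with Metro Exodus scaling best and Cyberpunk 2077 worst.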

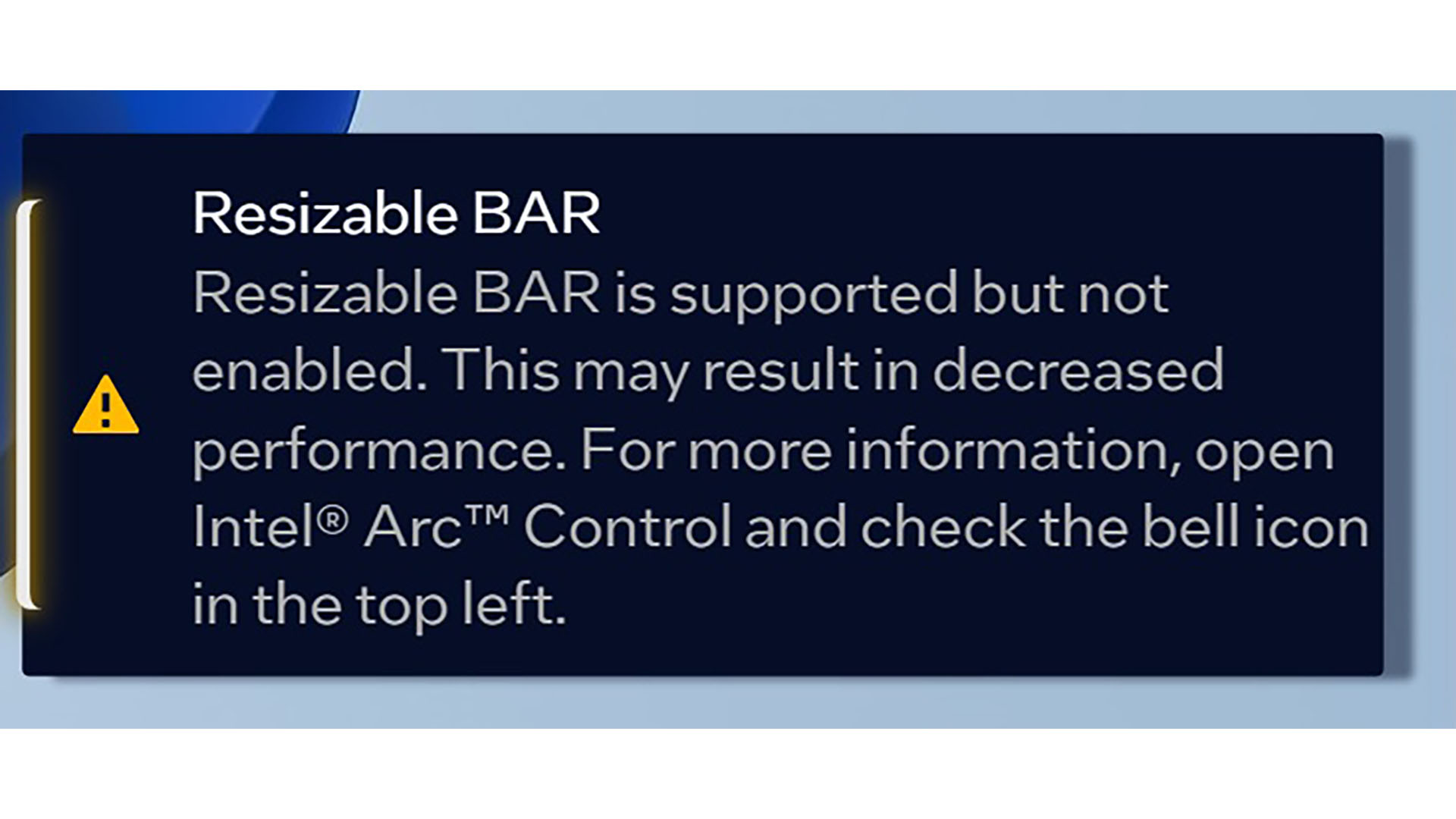

Intel Arc A770 reliance on resizable BAR

There’s another word of warning on top of the compatibility issues mentioned earlier, which is that the Intel Arc A770 is extremely reliant on resizable BAR to perform properly. You will get a pop-up warning from Intel’s driver software if it isn’t enabled, and you only need to look at the performance without it to see why.

We disabled resizable BAR to see what difference it made to performance, and it was huge. In Assassin’s Creed Valhalla at 1080p, the average frame rate dropped from 71fps all the way down to 38fps after disabling resizable BAR – that’s nearly half the frame rate gone. Comparatively, the Radeon RX 6600 XT frame rate only drops from 88fps to 79fps with the same settings.
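The arithmetic behind that comparison can be sketched as follows, using the frame rates quoted above. The function name is our own.

```python
def percent_drop(with_rebar_fps: float, without_rebar_fps: float) -> float:
    """Percentage of the frame rate lost when resizable BAR is disabled."""
    return 100.0 * (with_rebar_fps - without_rebar_fps) / with_rebar_fps


# Assassin's Creed Valhalla at 1080p, figures from the paragraph above.
arc_a770_drop = percent_drop(71, 38)
rx_6600_xt_drop = percent_drop(88, 79)

print(f"Arc A770: {arc_a770_drop:.1f}% slower without resizable BAR")
print(f"Radeon RX 6600 XT: {rx_6600_xt_drop:.1f}% slower without resizable BAR")
```

That works out to a drop of roughly 46 percent for the Intel GPU, compared with around 10 percent for the AMD card.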

Most motherboards support resizable BAR now, even including some old Intel Coffee Lake motherboards, but if you’re planning to put a new GPU in an old system, make doubly sure it supports resizable BAR before you buy an Intel Arc GPU.

On the plus side, we’re glad to see that the Intel Arc A770 has a full 16x PCIe 4 interface, rather than the 8x interfaces seen on Nvidia and AMD’s latest GPUs in the same price range. You won’t notice the difference between 8x and 16x on these GPUs on a PCIe 4 motherboard, but you do get a tangible performance drop-off if you use an 8x card on an older PCIe 3 motherboard.

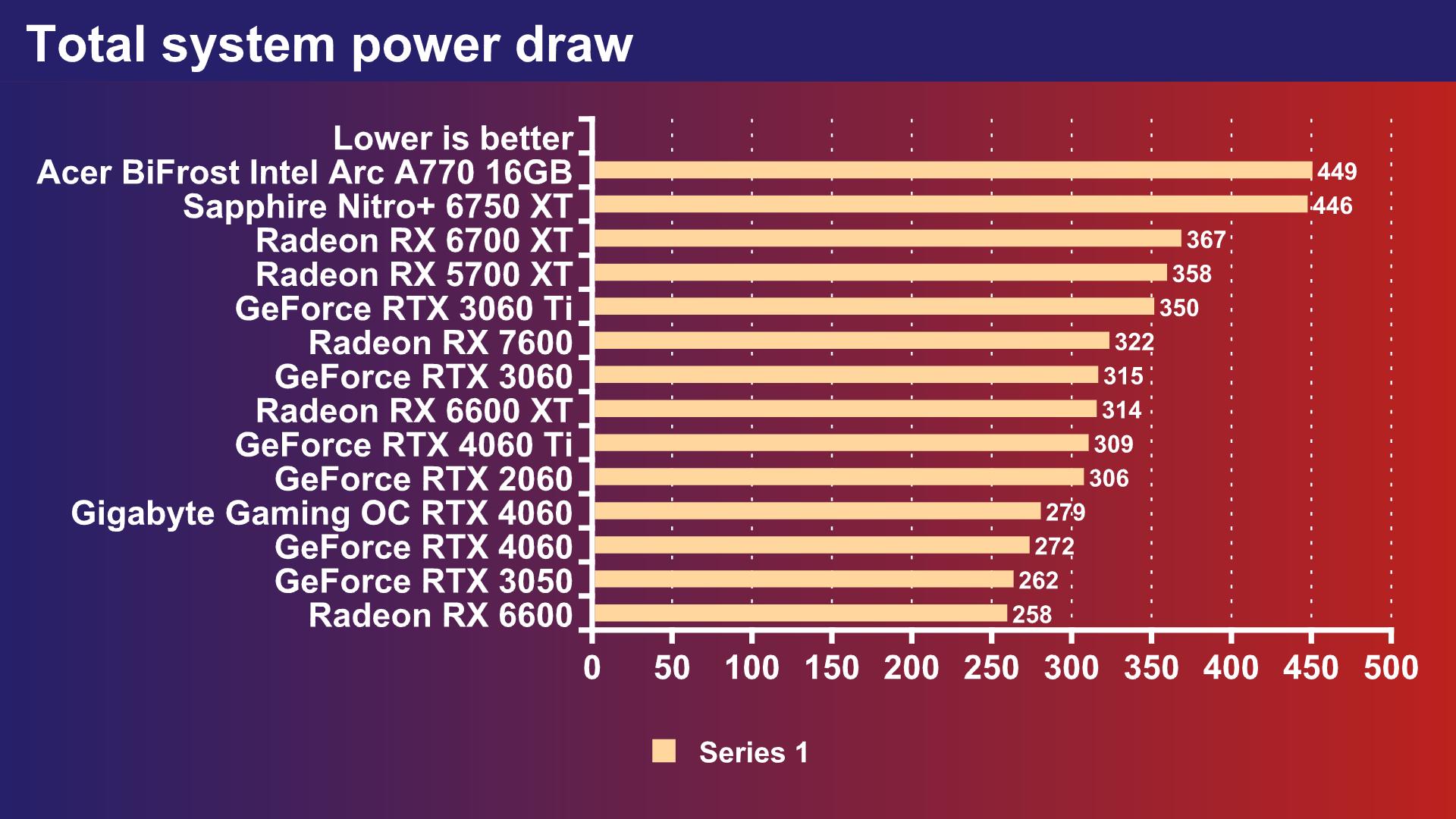

Intel Arc A770 power draw

The final problem is the Intel Arc A770 power draw. Our system drew 449W from the mains with the Acer BiFrost Intel Arc A770 card running at full load, which dwarfs the figures from Nvidia and AMD’s latest GPUs in this price range.

For example, our system only drew 272W with the RTX 4060 installed, and 322W with the Radeon RX 7600. This won’t be a deal breaker for a lot of people, but you’ll want a 650W PSU for this GPU, and that seems a bit silly for the performance on offer. Intel clearly has some work to do on performance per watt.
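Here's a minimal sketch of how those figures compare. Bear in mind that these are total system readings from the mains, not the power drawn by the graphics card alone, so the percentages describe our whole test rig; the names used are our own.

```python
# Total system power draw at full load, in watts, as measured above.
SYSTEM_DRAW_W = {
    "Arc A770": 449,
    "GeForce RTX 4060": 272,
    "Radeon RX 7600": 322,
}


def extra_draw(card: str, baseline: str) -> tuple[int, float]:
    """Return the extra watts and percentage increase of card over baseline."""
    delta = SYSTEM_DRAW_W[card] - SYSTEM_DRAW_W[baseline]
    return delta, 100.0 * delta / SYSTEM_DRAW_W[baseline]


vs_rtx_4060 = extra_draw("Arc A770", "GeForce RTX 4060")
vs_rx_7600 = extra_draw("Arc A770", "Radeon RX 7600")

print(f"vs RTX 4060: +{vs_rtx_4060[0]}W ({vs_rtx_4060[1]:.0f}% more)")
print(f"vs RX 7600: +{vs_rx_7600[0]}W ({vs_rx_7600[1]:.0f}% more)")
```

In other words, our Arc A770 system pulled 177W more than the RTX 4060 system, and 127W more than the Radeon RX 7600 system.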

Intel Arc A770 pros and cons

Pros

- Loads of memory

- Surprisingly competitive performance

- Ray tracing and XeSS work well

- Great Metro Exodus performance

Cons

- High power draw

- Compatibility problems

- Lags in Assassin’s Creed Valhalla

- Heavily reliant on resizable BAR

Acer BiFrost Intel Arc A770 OC specs

The Acer BiFrost Intel Arc A770 OC specs list is:

| Spec | Value |
| --- | --- |
| GPU | DG2-512 |
| Stream processors | 4,096 |
| RT cores | 32 |
| XMX cores | 512 |
| ROPs | 128 |
| Base clock | 2100 MHz |
| Boost clock | 2400 MHz |
| Memory | 16GB GDDR6 |
| Memory bus | 256-bit |
| Memory clock | 2187MHz (17.5GHz effective) |
| Memory bandwidth | 560 GB/s |
| L2 cache | 32MB |
| TDP / TGP | 160 W |
| Interface | 16x PCIe 4 |
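The memory figures in the specs list are consistent with each other, as this short sketch shows. It assumes the usual rule of thumb that peak bandwidth is the effective data rate per pin multiplied by the bus width in bytes; the function name is our own.

```python
def memory_bandwidth_gb_s(effective_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s from per-pin data rate and bus width."""
    return effective_rate_gbps * bus_width_bits / 8  # 8 bits per byte


# 17.5GHz effective memory on a 256-bit bus, as listed in the specs.
bandwidth = memory_bandwidth_gb_s(17.5, 256)
print(f"{bandwidth:.0f} GB/s")
```

This gives the same 560 GB/s quoted in the specs list.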

Acer BiFrost Intel Arc A770 OC price

The usual Acer BiFrost Intel Arc A770 OC price is $399, but it’s often available with a substantial discount to $319 (£299).

Price: Expect to pay $399 (£379).

Acer Intel Arc A770 OC review conclusion

The Acer Predator BiFrost Intel Arc A770 OC is definitely a mixed bag, but the mix is mostly positive. We have to say we’re really impressed by what Intel has achieved with this GPU compared to its previous efforts. It’s not only genuinely competitive, but it even outperforms the similarly priced competition from Nvidia and AMD in some tests.

The inclusion of 16GB of memory is a definite plus point over the GeForce RTX 4060 Ti 8GB, enabling you to turn on ray tracing in games such as Doom Eternal, which struggle with just 8GB of VRAM. Impressively, while Nvidia is still the ray tracing king, Intel has stepped ahead of AMD here, with the Arc A770 out-performing the AMD Radeon RX 7600 in ray-traced games.

There are problems, though. Our testing experience was soured by the number of compatibility issues that needed solving, and Intel really needs to step up its efforts on driver optimization if it wants to compete with Nvidia and AMD.

Also, while Intel’s XeSS tech worked impressively well in Cyberpunk 2077, there aren’t many games that support it compared to DLSS. What’s more, Nvidia now has DLSS 3 in its arsenal, which is a serious advantage in games that support it. The heavy reliance on resizable BAR is also potentially a problem for people upgrading older systems, and the Arc A770 consumes a lot of power for the performance on offer.

As it stands, we’re still going to stick to recommending the Radeon RX 7600 as our preferred budget gaming GPU, as its price is fantastic, while the GeForce RTX 4060 Ti 8GB is the next best step up. The latter only has 8GB of memory, which is a shortcoming, but it makes up for it with its support for DLSS 3 and overall better user experience than the Intel Arc A770.

That said, we’re impressed by the huge stride Intel has made in GPU development with the Arc A770. We honestly didn’t think we’d ever see this sort of performance from an Intel GPU, and the company has clearly demonstrated that it has the hardware clout to properly compete in this game. With some solid work on compatibility, driver optimizations, and power draw, the next Intel Battlemage GPU could properly take on the current GPU duopoly.

If you’re thinking of buying a new GPU, make sure you also read our guide to the best graphics card, where we give you our best recommendations at a range of prices. If you’re speccing up a new PC, make sure you also check out our guide to the best gaming CPU, as well as our tutorial on how to build a gaming PC, where we take you through every step of the process.