Pity the poor PC of 1983-1984, before EGA graphics changed everything. It wasn’t the graphics powerhouse we know today. IBM’s machines, such as the IBM PC 5150, and their clones might have been the talk of the business world, but they were stuck with text-only displays or low-definition bitmap graphics.

The maximum color graphics resolution was 320 x 200, with colors limited to four from a hard-wired palette of 16. Worse, three of those colors were cyan, brown and magenta, and half of the 16 were just lighter variations of the other half.

By this point, IBM’s Color Graphics Adaptor (CGA) standard was looking embarrassing. Even home computers such as the Commodore 64 could display 16-color graphics, and Apple was about to launch the Apple IIc, which could hit 560 x 192 in monochrome, or show 16 colors at a lower resolution. IBM had introduced the Monochrome Display Adaptor (MDA) standard, but this couldn’t dish out more pixels, only high-resolution mono text.

The first EGA graphics card

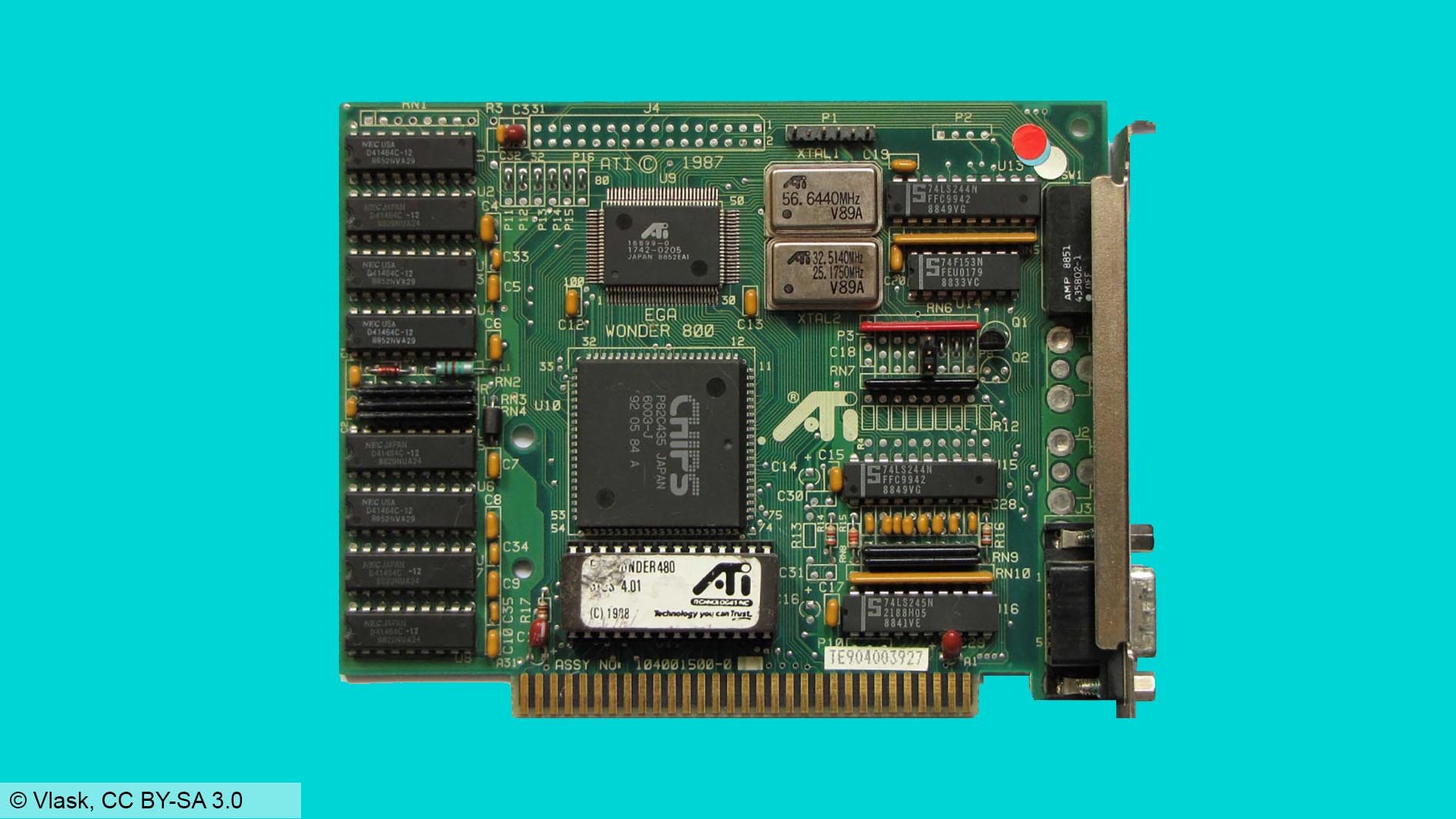

The original Enhanced Graphics Adaptor (EGA) was a hefty optional add-in card for the IBM PC/AT, using the standard 8-bit ISA bus and with support built into the new model’s motherboard. Previous IBM PCs required a ROM upgrade in order to support it.

It was massive, measuring over 13 inches long and containing dozens of specialist large-scale integration (LSI) chips, memory controllers, memory chips and crystal timers to keep it all running in sync. It came with 64KB of RAM on board but could be upgraded to as much as 192KB through a Graphics Memory Expansion Card and an additional Memory Module Kit.
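To get a feel for why that extra memory mattered, here is a rough back-of-the-envelope sketch in Python. It assumes 16 colors need 4 bits per pixel and ignores any padding or extra display pages; the function name and the list of modes are purely illustrative, not anything from IBM's documentation.

```python
# Rough estimate of how much video RAM one full screen needs.
# Assumption: 16 colors -> 4 bits per pixel, no padding, single page.

def framebuffer_bytes(width, height, colors):
    """Bytes needed to store one screen at the given size and color count."""
    bits_per_pixel = (colors - 1).bit_length()  # 16 colors -> 4 bits
    return width * height * bits_per_pixel // 8

BASE_RAM = 64 * 1024  # the 64KB fitted to the basic card

# Hypothetical list of modes to compare against the base memory.
for width, height, colors in [(320, 200, 16), (640, 200, 16), (640, 350, 16)]:
    needed = framebuffer_bytes(width, height, colors)
    fits = needed <= BASE_RAM
    print(f"{width} x {height} in {colors} colors: {needed:,} bytes, fits in 64KB: {fits}")
```

By this estimate, a 640 x 350 screen in 16 colors needs 112,000 bytes, well beyond the 65,536 bytes on the basic card, while 640 x 200 in 16 colors just squeezes in at 64,000 bytes.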

Crucially, these first EGA cards were designed to work with the IBM 5154 Enhanced Color Display Monitor, while still being compatible with existing CGA and MDA displays. IBM managed this by using the same 9-pin D-Sub connector, and by fitting four DIP switches to the back of the card to select your monitor type.

EGA was a significant upgrade from low-res, four-color CGA. With EGA, you could go up to 640 x 200 or even (gasp) 640 x 350. You could have 16 colors on the screen at once from a palette of 64. Where once even owners of 8-bit home computers would have laughed at the PC’s graphics capabilities, EGA and the 286 processor put the PC/AT back in the game.
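The palette of 64 comes from giving each of red, green and blue two bits, for four levels per channel (4 x 4 x 4 = 64). As a minimal sketch, assuming the commonly documented 6-bit layout in which bits 2, 1 and 0 are the stronger red, green and blue signals and bits 5, 4 and 3 are the weaker ones, a palette entry can be turned into a modern 24-bit color like this. The function name is our own, chosen for illustration.

```python
# Convert a 6-bit EGA palette value (0-63) to an 8-bit-per-channel RGB triple.
# Assumed bit layout: bit 5 = weak red, 4 = weak green, 3 = weak blue,
#                     bit 2 = strong red, 1 = strong green, 0 = strong blue.

def ega_to_rgb(value):
    """Return (red, green, blue) for a 6-bit EGA palette value."""
    if not 0 <= value <= 63:
        raise ValueError("EGA palette values are 6 bits wide (0-63)")

    def channel(strong_bit, weak_bit):
        strong = (value >> strong_bit) & 1
        weak = (value >> weak_bit) & 1
        # The strong signal contributes two thirds of full brightness,
        # the weak signal one third.
        return 0xAA * strong + 0x55 * weak

    return (channel(2, 5), channel(1, 4), channel(0, 3))

# All 64 values map to distinct colors.
all_colors = {ega_to_rgb(v) for v in range(64)}
print(len(all_colors))
```

For example, `ega_to_rgb(0x14)` gives `(170, 85, 0)`, a brown, and `ega_to_rgb(0x3F)` gives `(255, 255, 255)`, full white. A game would pick any 16 of these 64 values to use on screen at once.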

16 colors make all the difference – here’s The Secret of Monkey Island in EGA (left) and CGA (right)

The price of EGA

However, EGA had one big problem: it was prohibitively expensive, even in an era when PCs were already astronomically expensive. The basic EGA card price was over $500 (around $1,400 today), and the Memory Expansion Card a further $199.

Go for the full 192KB of RAM and you were looking at a total of nearly $1,000 (approximately $2,900 in today’s money), making a top-end EGA card way more expensive than the GeForce RTX 4090 today. What’s more, the monitor you needed to make the most of it cost a further $850 (approximately $2,500 today). EGA was a rich enthusiast’s toy.

However, while the initial card was big and hideously complex, the basic design and all the tricky I/O stuff were relatively easy to work out. Within a year, a smaller company, Chips and Technologies (C&T) of Milpitas, California, had designed an EGA-compatible graphics chipset.

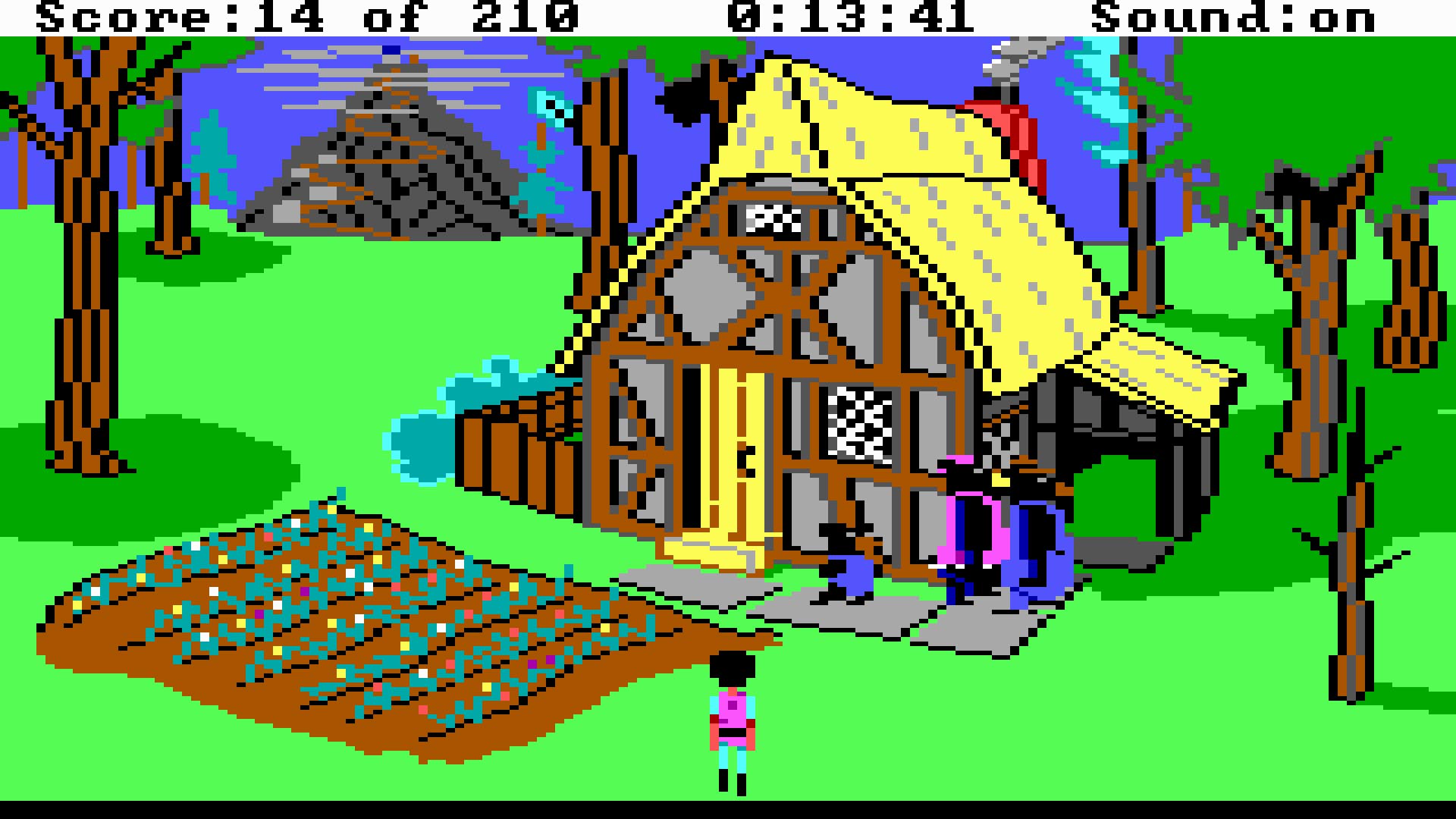

In The Colonel’s Bequest, Roberta Williams and a talented art team used EGA to create more detailed and cinematic graphic adventures

C&T’s chipset consolidated and shrank IBM’s extensive line-up of chips into a smaller number, which could fit on a smaller, cheaper board. The first C&T chipset launched in September 1985, and within a further two months, half a dozen companies had introduced EGA-compatible cards.

Other chip manufacturers developed their own clone chipsets and add-in cards too, and by 1986, over two dozen manufacturers were selling EGA clone cards, claiming over 40 percent of the early graphics add-in card market. One, Array Technology Inc, would become better known as ATi, and was later swallowed up by AMD. If you’re on the red team in the ongoing GPU war, that story starts here.

Using Chips and Technologies’ EGA chipset, early graphics card manufacturers such as ATi could produce smaller, cheaper boards.

EGA gaming

EGA also had a profound impact on PC gaming. Of course, there were PC games before EGA, but many were text-based or built to work around the severe limitations of CGA. With EGA, there was scope to create striking and even beautiful PC games.

This didn’t happen overnight. The cost of 286 PCs, EGA cards and monitors meant that it was 1987 before EGA support became common, and 1990 before it hit its stride. Yet EGA helped to spur on the rise and development of the PC RPG, including the legendary SSI ‘Gold Box’ series of Advanced Dungeons & Dragons titles, Wizardry VI: Bane of the Cosmic Forge, Might and Magic II, and Ultima II to Ultima V.

With a wider range of colors, EGA helped hasten a golden age of PC RPGs in the late eighties and early nineties.

It also powered a new wave of better-looking graphical adventures, such as Roberta Williams’ King’s Quest II and III (pictured below), plus The Colonel’s Bequest. EGA helped LucasArts to bring us pioneering point-and-click classics such as Maniac Mansion and Loom in 16 colors. And while most games stuck to a 320 x 200 resolution, some, such as SimCity, would make the most of the higher 640 x 350 option.

What’s more, EGA made real action games on the PC a realistic proposition. The likes of the Commander Keen games proved the PC could run scrolling 2D platformers properly. You could port over Apple II games such as Prince of Persia, and they wouldn’t be a hideous, four-color mess.

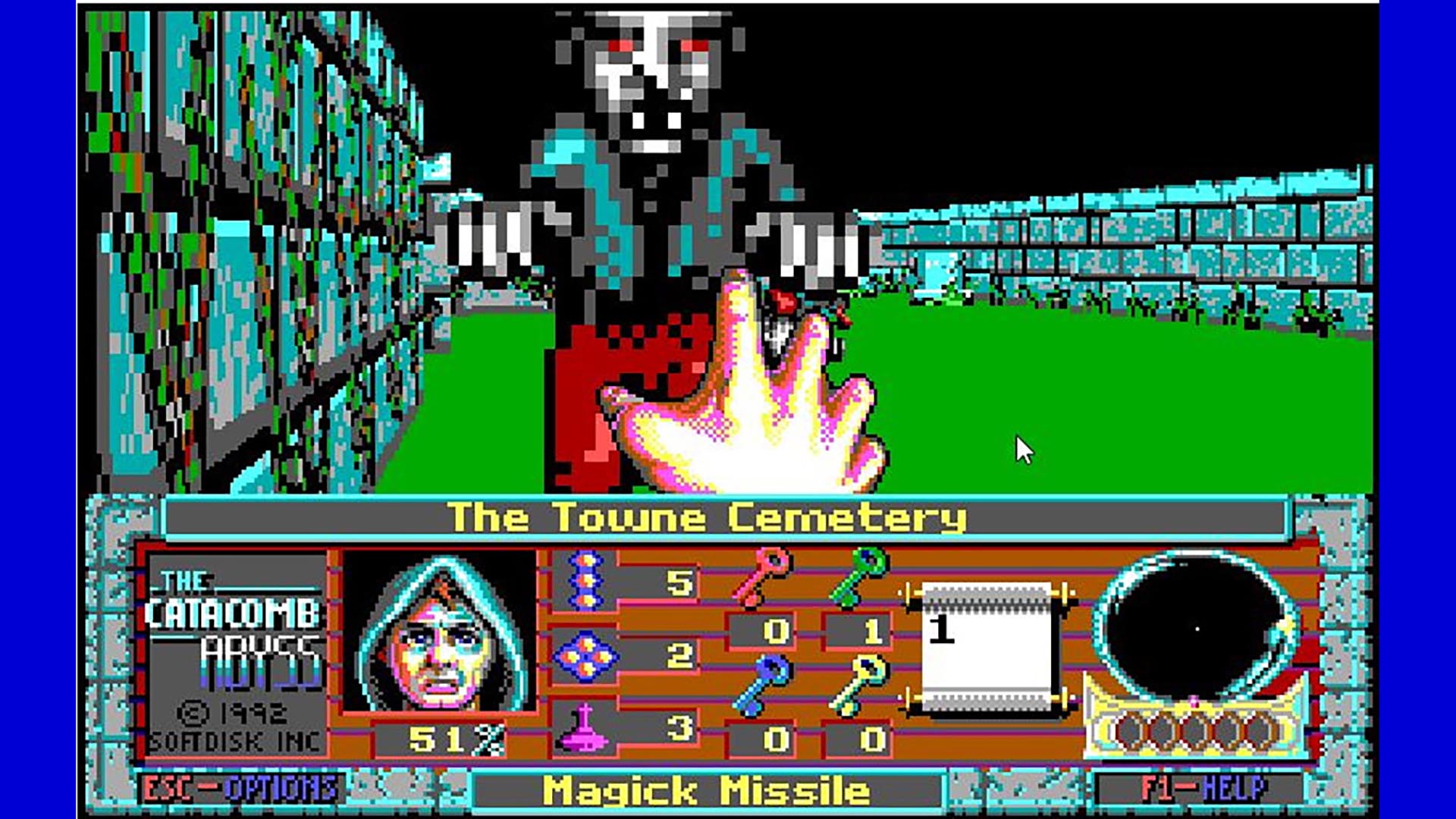

And when the coder behind Commander Keen – a certain John Carmack – started work on a new 3D sequel to the Catacomb series of dungeon crawlers, he created something genuinely transformative. Catacomb 3-D and Catacomb: Abyss gave Carmack his first crack at a texture-mapped 3D engine, and arguably started the FPS genre.

Sure, EGA had its limitations – looking back, there’s an awful lot of green and purple – but with care and creativity, an artist could do a lot with 16 colors and begin creating more immersive game worlds.

Forgive the blocky pixels and 16-color palette – Catacomb 3-D and Catacomb: Abyss sowed the seeds of Wolfenstein and Doom

The decline of EGA graphics

EGA’s time at the top of the graphics tech tree was short. Home computers kept evolving, and in 1985, Commodore launched the Amiga, supporting 64 colors in games and up to 4,096 in its special HAM mode. Even as it launched EGA, IBM was talking about a new, high-end board, the IBM Professional Graphics Controller (PGC), which could run screens at 640 x 480 with 256 colors from a total of 4,096.

PGC was priced high and aimed at the professional CAD market, but it helped to pave the way for the later VGA graphics standard, introduced with the IBM PS/2 in 1987. VGA matched PGC’s 640 x 480 maximum resolution and supported up to 256 colors at 320 x 200. This turned out to be exactly what was needed for a new generation of operating systems, applications and PC games.

What extended EGA’s lifespan was the fact that VGA remained expensive until the early 1990s, while EGA had developed a reasonable installed base. Even once VGA hit the mainstream, many games remained playable in a slightly gruesome 16-color EGA mode. Much like the 286 processor and the AdLib sound card, EGA came before the golden age of PC gaming, but this standard paved the way for the good stuff that came next.
