Just how do you follow up a graphics card as beloved, ground-breaking, and just plain damn awesome as the original 3dfx Voodoo Graphics? There was, after all, no way to repeat that gargantuan leap from the grainy, low-resolution horror of CPU-bound 3D rendering to the smoothness of hardware-accelerated rendering, but the 3dfx Voodoo 2 still managed to maintain the momentum.

Not only did the Voodoo 2 give you the ability to upgrade your 3D gaming resolution from 640 x 480 to 800 x 600, but if you paired two of them together with a ribbon cable in the new SLI mode, you could even play games at 1,024 x 768 (cue sarcastic jaw drop). If you played games, the 3dfx Voodoo 2 was undoubtedly the best graphics card of its day.

While it’s difficult to think of this as high resolution in an age where people are sincerely discussing gaming at 7,680 x 4,320, back in 1998 most people were using 14- or 15-inch CRT screens, some of which couldn’t even go above 800 x 600 in non-interlaced mode. The idea that you could actually run 3D-accelerated games at 1,024 x 768 (786,432 pixels), when the first 3dfx Voodoo cards could only run at 640 x 480 (307,200 pixels), seemed astonishing.
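To put those numbers side by side, here is a quick Python sketch that works out the pixel count for each resolution mentioned above and compares it with 640 x 480. It is our own illustration, and the labels in the dictionary are simply our shorthand for which setup ran at which resolution.

```python
# Resolutions quoted in the text, keyed by the setup that could run them.
RESOLUTIONS = {
    "Voodoo Graphics": (640, 480),
    "Voodoo 2": (800, 600),
    "Voodoo 2 SLI": (1024, 768),
}


def pixel_count(width, height):
    """Number of pixels that have to be filled for one frame."""
    return width * height


baseline = pixel_count(*RESOLUTIONS["Voodoo Graphics"])
for name, (width, height) in RESOLUTIONS.items():
    pixels = pixel_count(width, height)
    print(f"{name}: {width} x {height} = {pixels:,} pixels "
          f"({pixels / baseline:.2f}x the original)")
```

Seen this way, 1,024 x 768 asks the hardware to fill 2.56 times as many pixels per frame as 640 x 480 did.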

We’ll come to SLI in a minute, though, as the Voodoo 2 was already taking advantage of parallel processing on its own, without the need for a second card.

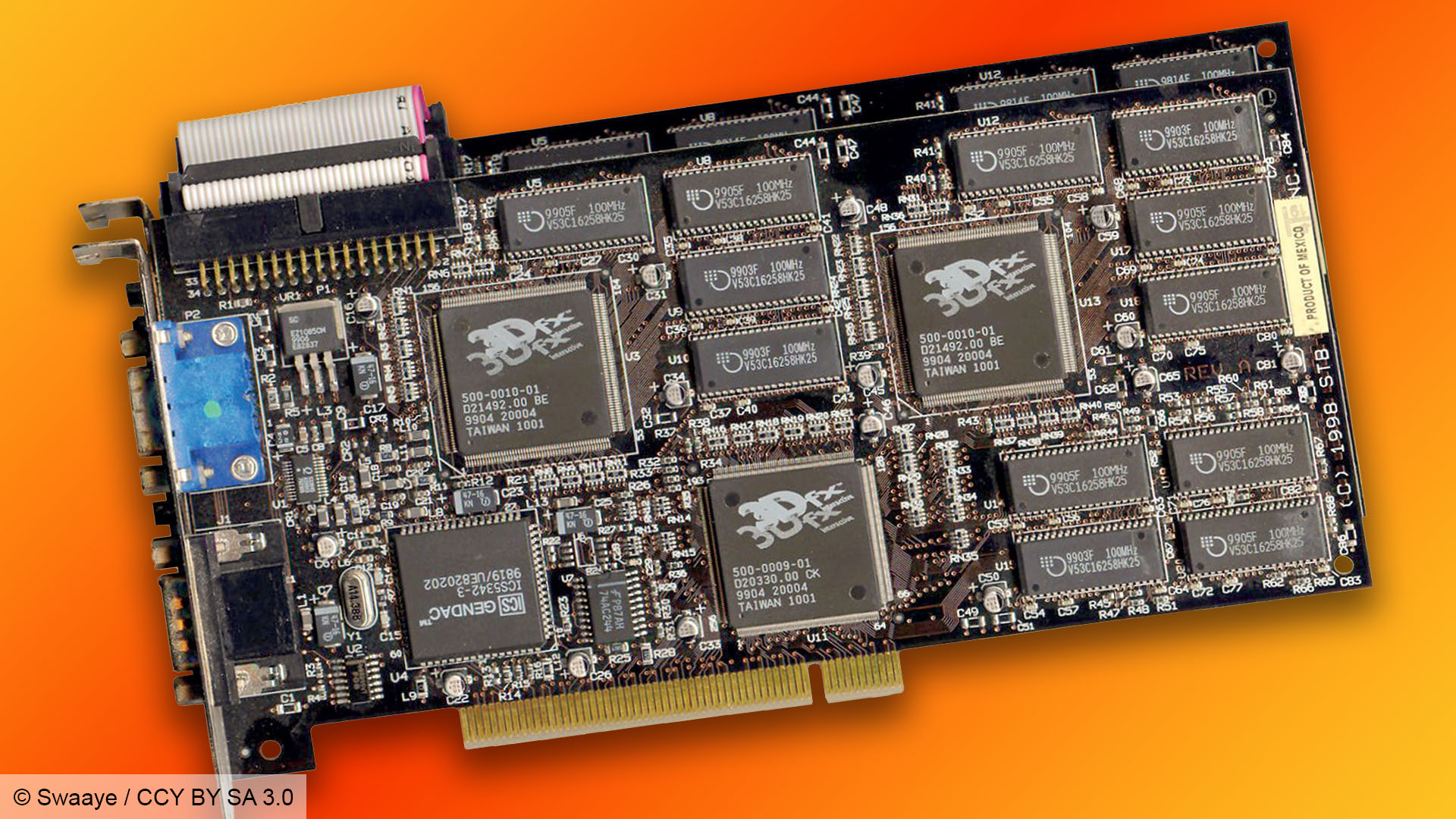

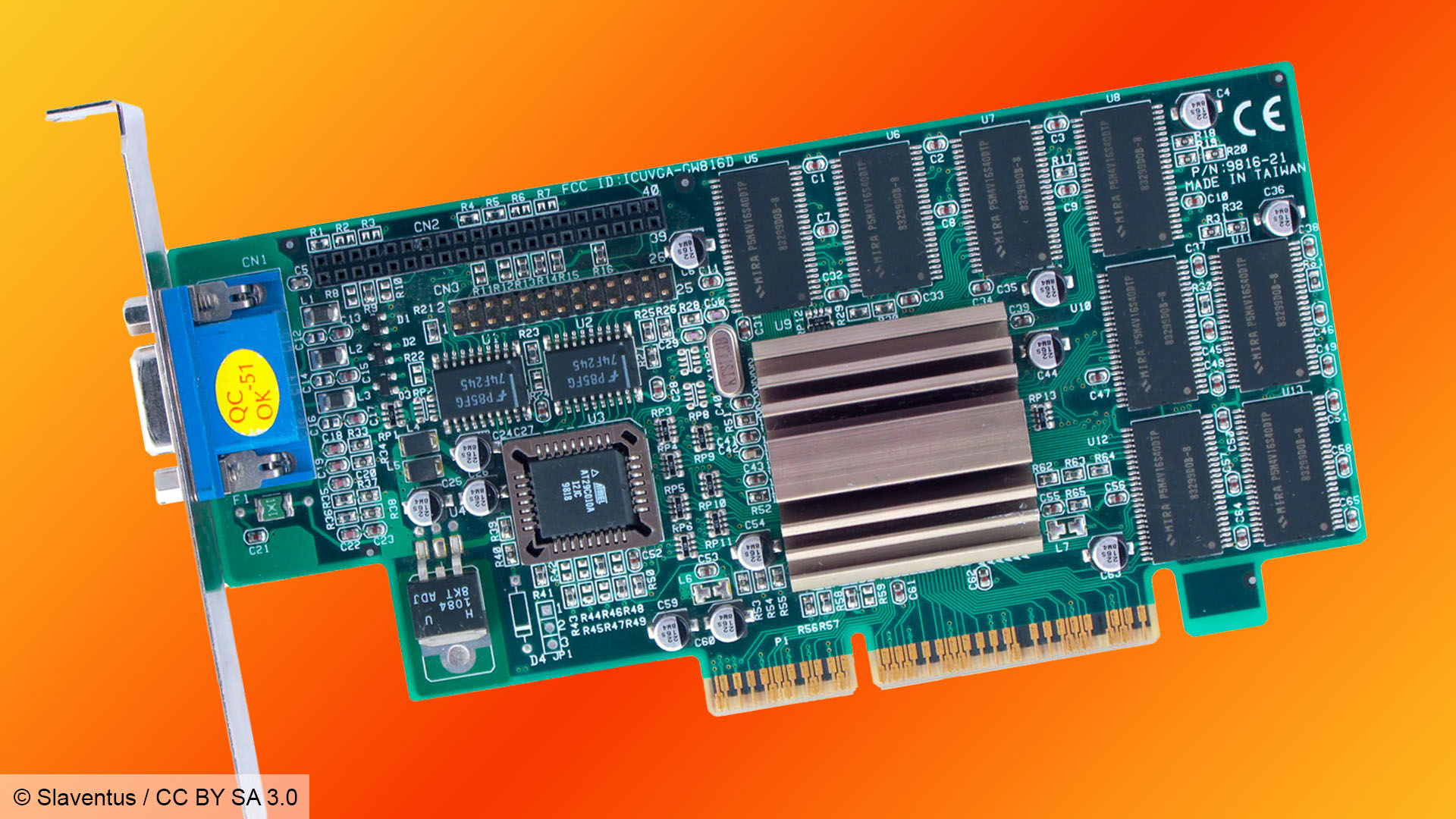

Part of the reason why I loved the Voodoo 2 so much at the time wasn’t just that it was immensely powerful, but also that the cards themselves really looked like they were packed to the rafters with silicon. Like early sound cards, the PCBs were covered in chips.

There was either 8MB or 12MB of EDO memory, made up of 256KB chips, often on either side of the circuit board, and there were also three big chips with fancy 3dfx logos on them, which is where the magic happened. Why three? Well, the Voodoo 2 took the idea of the original two-chip Voodoo Graphics chipset and parallelized some of the work.

Let’s step back and look at that first Voodoo Graphics chipset, called SST1, which was built by TSMC on a 500nm process. It had two main chips: a frame buffer interface (FBI) and a texture mapping unit (TMU), each of which was usually allocated 2MB of memory, giving you 4MB in total, although some cards gave you more memory.

The job of the FBI was to take the polygon data from your CPU, and do the basic pre-texturing work – Z-buffering, Gouraud shading, tracking the polygons, and filling the visible ones with basic shading.
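As a rough illustration of two of those jobs, here is a minimal Python sketch of Gouraud shading along one horizontal span, followed by a Z-buffer test. The function names and data layout are our own assumptions for illustration. This is the textbook version of each technique, not a model of how the FBI chip was actually wired.

```python
import math


def gouraud_span(x_start, x_end, color_start, color_end):
    """Yield (x, colour) pairs, blending linearly between the two end colours."""
    length = x_end - x_start
    for x in range(x_start, x_end + 1):
        t = (x - x_start) / length if length else 0.0
        yield x, tuple(a + (b - a) * t for a, b in zip(color_start, color_end))


def draw_span(frame, depth, y, span, z):
    """Z-buffer test: keep a pixel only if it is nearer than the stored depth.

    Assumes a single depth value for the whole span to keep the sketch short.
    """
    for x, color in span:
        if z < depth[y][x]:
            depth[y][x] = z
            frame[y][x] = color


WIDTH, HEIGHT = 4, 1
frame = [[(0, 0, 0)] * WIDTH for _ in range(HEIGHT)]
depth = [[math.inf] * WIDTH for _ in range(HEIGHT)]

# A near red-to-blue span, then a farther white span that should fail the depth test.
draw_span(frame, depth, 0, gouraud_span(0, 3, (255, 0, 0), (0, 0, 255)), z=0.5)
draw_span(frame, depth, 0, gouraud_span(0, 3, (255, 255, 255), (255, 255, 255)), z=0.8)
print(frame[0])
```

The second span never reaches the frame, because every pixel it covers already holds something nearer to the viewer.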

Each frame would then be split into scan lines and sent to the TMU (or T-Rex as 3dfx called it), which would apply perspective-correct textures, including mipmapping (using smaller, less-detailed textures as an object becomes more distant) and bilinear or trilinear filtering (smoothing out blocky textures when displayed at their largest size close to the viewpoint).
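For anyone who wants to see what those two terms mean in practice, here is a small Python sketch of bilinear filtering and mipmap level selection. It uses a tiny grayscale texture stored as nested lists, and the function names are hypothetical. Treat it as the general technique under simplified assumptions rather than a description of the T-Rex silicon.

```python
import math


def bilinear_sample(texture, u, v):
    """Blend the four texels surrounding the fractional texel position (u, v)."""
    height, width = len(texture), len(texture[0])
    # Clamp to the texture edges so the neighbouring texels always exist.
    u = min(max(u, 0.0), width - 1.0)
    v = min(max(v, 0.0), height - 1.0)
    x0, y0 = int(u), int(v)
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    fx, fy = u - x0, v - y0
    top = texture[y0][x0] * (1 - fx) + texture[y0][x1] * fx
    bottom = texture[y1][x0] * (1 - fx) + texture[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


def mip_level(texels_per_pixel, num_levels):
    """Pick a mipmap level from how many texels (along one axis) land on one pixel.

    Level 0 is the full-size texture and each further level halves its size.
    """
    if texels_per_pixel <= 1.0:
        return 0
    return min(int(math.log2(texels_per_pixel)), num_levels - 1)


checker = [[0.0, 1.0],
           [1.0, 0.0]]
print(bilinear_sample(checker, 0.5, 0.5))  # halfway between all four texels
print(mip_level(4.0, num_levels=9))        # distant surface, smaller texture
```

Sampling the checkerboard at its centre gives an even mix of the four texels, which is exactly the smoothing effect you see on close-up surfaces.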

3dfx Voodoo 2 chips

The Voodoo 2 then took this same approach and ran with it. For starters, it accelerated a bit more of the 3D graphics pipeline in hardware, taking the triangle setup process away from the CPU.

Secondly, the architecture was officially able to scale as more texture units were added. The original Voodoo Graphics standard could also scale with extra TMUs (it’s detailed in the whitepapers from the time), but this was never used in the gaming cards available in the shops.

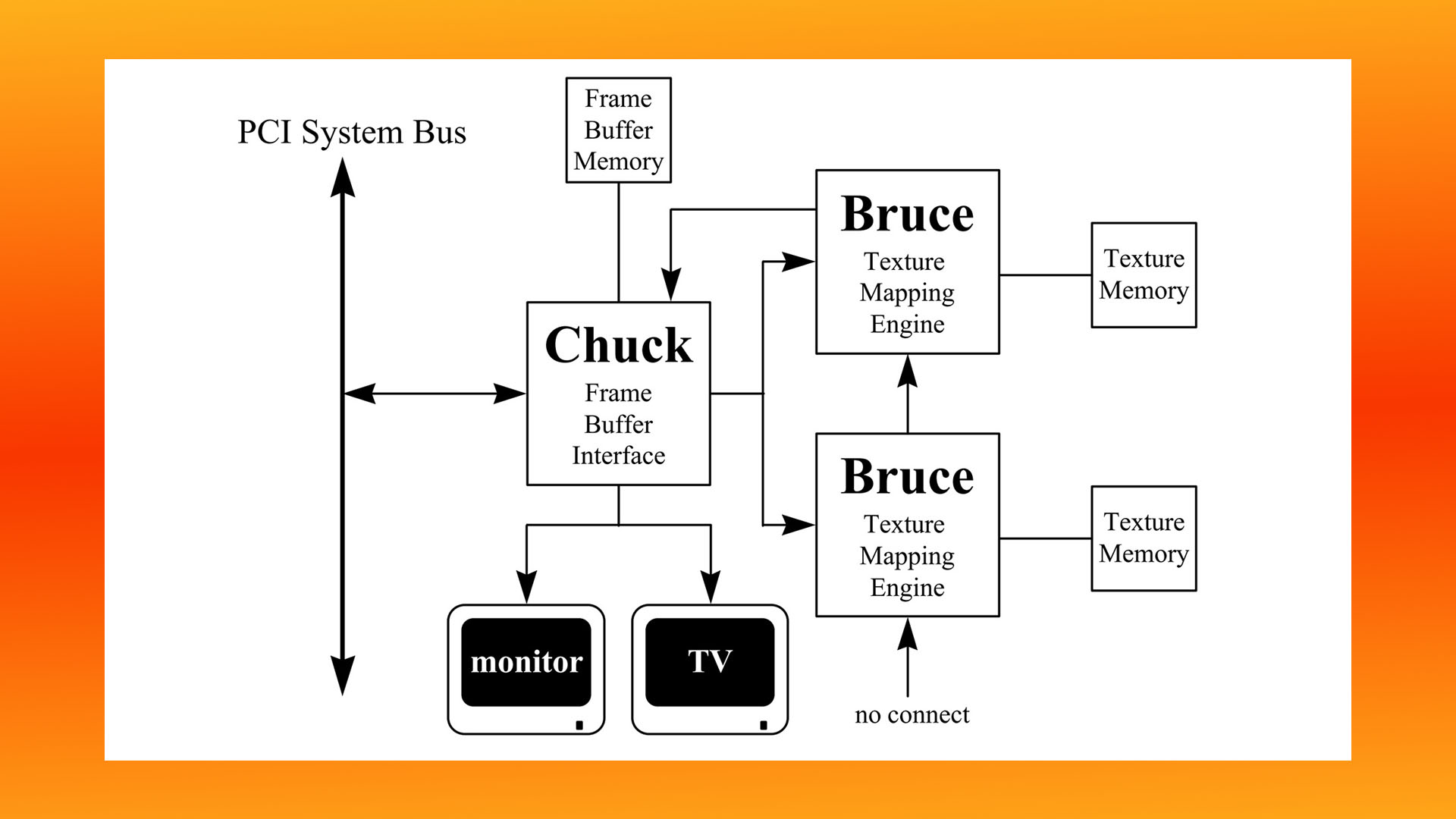

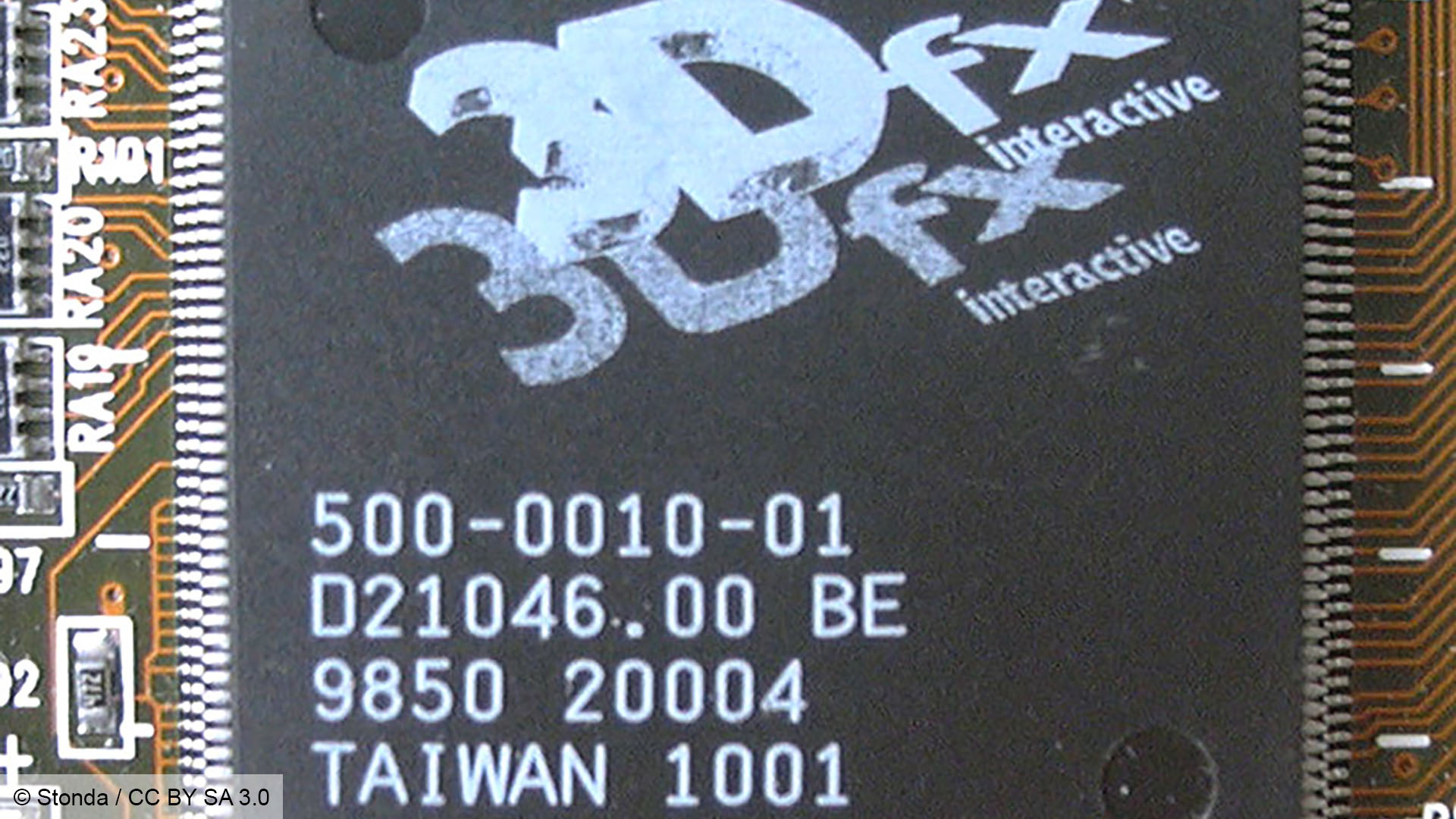

Like SST1, the new SST2 chipset (fabricated on a 350nm process by TSMC) also contained an FBI chip codenamed Chuck (denoted by ‘CK’ on the chip) and a texture unit codenamed Bruce (denoted by ‘BE’ on the chip).

A standard Voodoo 2 card would sport one Chuck chip, which did all the communication with the CPU, performed the aforementioned triangle setup, and also applied Gouraud shading, alpha blending, fogging, depth-buffering and dithering. Chuck also had its own allocated 2-4MB chunk of 90MHz EDO memory, addressed through a 64-bit interface, and handled display controller duties as well.

Two Bruce chips would then be hooked up to Chuck, and as with the T-Rex chip on the original Voodoo Graphics chipset, these would map the textures to the correct perspective, and process level-of-detail (LOD) mipmapping, as well as applying bilinear or trilinear filtering.

By having two of these chips on one card, you could effectively perform double the texturing work in a single pass, as long as the game supported more than one texture layer. Both Unreal and Quake II supported dual texturing per pixel in a single pipeline pass, for example, resulting in gains from the Voodoo 2 compared with the original Voodoo Graphics cards.

If the game didn’t support dual texturing then you’d still get a decent uplift from the clock speed increase, going from 50MHz on the first Voodoo to 90MHz on the Voodoo 2, but the difference wasn’t so pronounced. Having that extra chip on hand also effectively gave you the ability to enable other features that would otherwise take too much of a performance hit due to the number of passes required.
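A back-of-the-envelope model shows both effects. Assume (and this is our simplification, not a 3dfx figure) that each chipset draws one pixel per clock per pass, and that any texture layer the texture units cannot handle at once costs another full pass. The sketch below, with hypothetical function names, compares the two chipsets under that idealized model.

```python
import math


def passes_needed(texture_layers, texture_units):
    """Each pass can apply at most one texture layer per texture unit."""
    return math.ceil(texture_layers / texture_units)


def peak_megapixels_per_second(clock_mhz, texture_layers, texture_units):
    """Idealized peak: one pixel per clock, divided by the passes required."""
    return clock_mhz / passes_needed(texture_layers, texture_units)


for layers in (1, 2):
    voodoo1 = peak_megapixels_per_second(50, layers, texture_units=1)
    voodoo2 = peak_megapixels_per_second(90, layers, texture_units=2)
    print(f"{layers} layer(s): {voodoo1:.0f} vs {voodoo2:.0f} Mpixels/s "
          f"({voodoo2 / voodoo1:.1f}x)")
```

Under those assumptions the uplift is 1.8x for single-textured games and 3.6x for dual-textured ones. Real games would fall short of both figures, as the model ignores CPU and memory limits entirely.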

A prime example is trilinear filtering, which could now be performed in one pass rather than two. There was also then more headroom for simulated bump mapping, reflection maps, shadow maps, more detailed textures and lighting maps. Some games really took advantage of this, such as the 1998 game Battlezone, for which you could download a Voodoo 2 patch that gave you some graphically enhanced effects, including more detailed explosions.

As with Chuck, each Bruce chip had a 64-bit memory interface and its own allocation of EDO memory. The minimum spec was 2MB per Bruce chip, which would give you an 8MB card with a 4MB frame buffer. There were also cards with more memory – 12MB cards, where each chip had 4MB allocated to it, were a very common option.
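The arithmetic behind those card sizes is simple enough to sketch in Python. The function name is our own, and the 4MB frame buffer is the figure quoted above for both card sizes.

```python
def card_memory_mb(frame_buffer_mb, bruce_chips, mb_per_bruce):
    """Total on-card memory: Chuck's frame buffer plus each Bruce chip's texture memory."""
    return frame_buffer_mb + bruce_chips * mb_per_bruce


print(card_memory_mb(frame_buffer_mb=4, bruce_chips=2, mb_per_bruce=2))  # the 8MB card
print(card_memory_mb(frame_buffer_mb=4, bruce_chips=2, mb_per_bruce=4))  # the 12MB card
```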

3dfx Voodoo 2 SLI

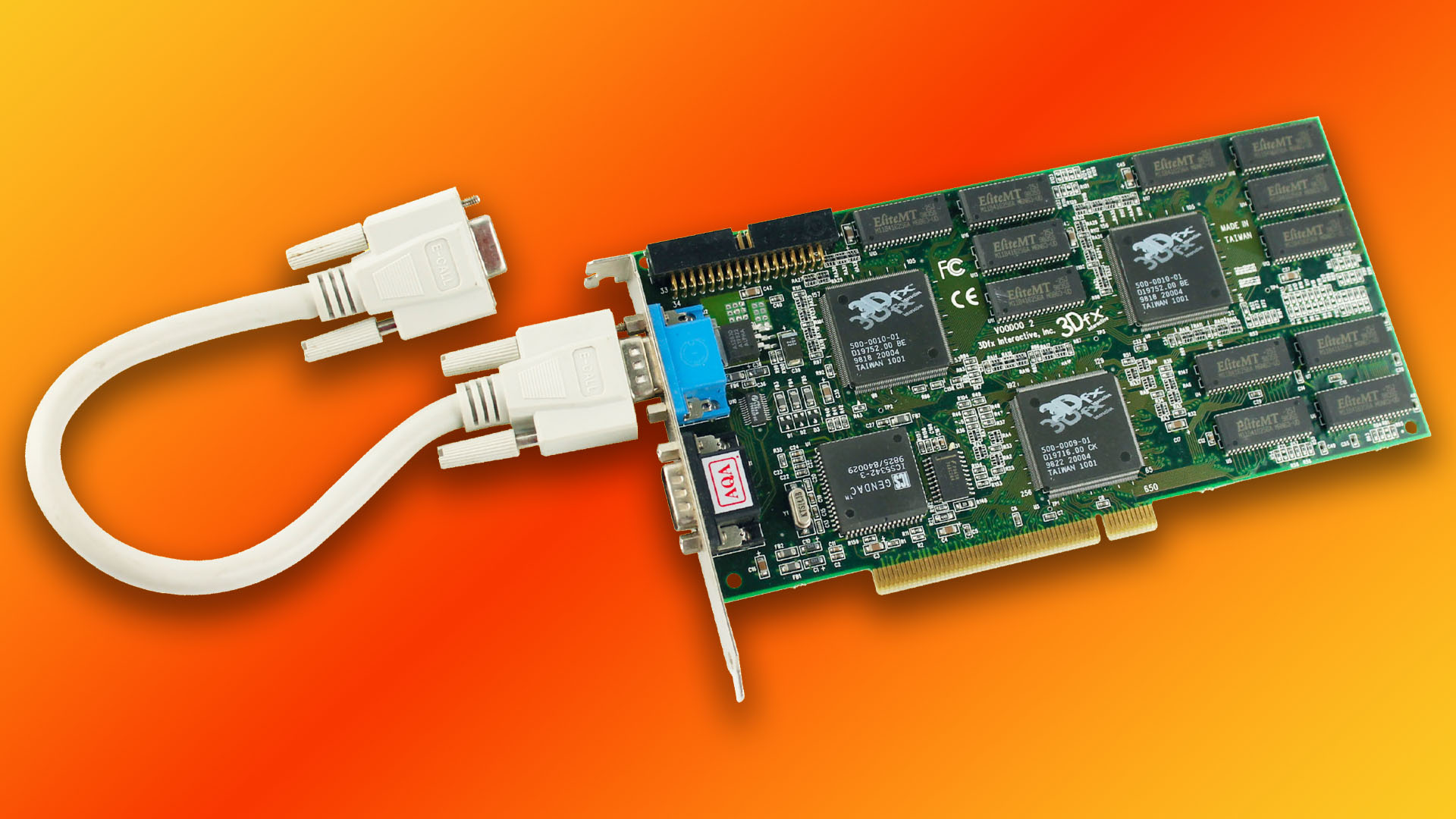

If that extra performance boost wasn’t enough for you, then you could even go one step further, and use the little grey ribbon cable that came in every Voodoo 2 card’s box. This cable enabled you to chain two Voodoo 2 cards together using the pin headers at the tops of the cards.

The two cards could then share synchronization information with each other over the cable, giving you the headroom needed to run games at 1,024 x 768, and enabling even more work to be done in one pass, as you now had four Bruce chips working together.
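One way to see why 1,024 x 768 needed that second card is to total up the frame buffer memory a frame requires. The Python sketch below assumes 16-bit colour, double buffering and a 16-bit depth buffer, plus a 4MB frame buffer per card, with each card storing only its own half of the lines in SLI mode. Those are our assumptions for illustration, and real memory layouts add overheads that are ignored here.

```python
MIB = 1024 * 1024


def frame_buffer_bytes(width, height, bytes_per_pixel=2,
                       color_buffers=2, depth_buffers=1):
    """Memory for front and back colour buffers plus a depth buffer (assumed layout)."""
    return width * height * bytes_per_pixel * (color_buffers + depth_buffers)


def fits(width, height, frame_buffer_mib=4, cards=1):
    """True if each card's share of the frame fits in its own frame buffer memory."""
    per_card = frame_buffer_bytes(width, height) / cards
    return per_card <= frame_buffer_mib * MIB


for width, height, cards in ((800, 600, 1), (1024, 768, 1), (1024, 768, 2)):
    needed = frame_buffer_bytes(width, height) / cards / MIB
    print(f"{width} x {height} on {cards} card(s): {needed:.2f}MB per card, "
          f"fits: {fits(width, height, cards=cards)}")
```

Under these assumptions 800 x 600 fits comfortably on one card, 1,024 x 768 overflows it, and splitting the lines across two cards brings each card's share back within budget.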

Although SLI is the same initialism used by Nvidia, which bought out 3dfx and its assets in December 2000, for its (now largely defunct) multi-GPU gaming technology, the original 3dfx implementation worked quite differently. For Nvidia, it stood for ‘scalable link interface’, where cards worked together either on alternate frames or in a split screen mode.

For 3dfx, it stood for ‘scan line interleave’, and it took advantage of the part of 3dfx’s pipeline in between the Chuck and Bruce chips. Chuck would do the job of splitting each frame into scan lines (horizontal chunks of pixels from the left edge of the scene to the right), and then the Voodoo 2’s pair of Bruce chips would get to work on the texturing work. With two cards, however, they could work in tandem – with one card texturing the even-numbered scan lines, and one card texturing the odd-numbered ones.
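The even and odd split is easy to picture in code. Here is a minimal Python sketch with hypothetical function names. Note that the real cards worked in parallel and stayed in step over the ribbon cable, whereas this loop simply takes the cards one after the other.

```python
def lines_for_card(card_index, height, num_cards=2):
    """Scan lines handled by one card: every num_cards-th line, offset by its index."""
    return range(card_index, height, num_cards)


def render_sli(height, width, shade, num_cards=2):
    """Build a frame by letting each card fill only its own scan lines."""
    frame = [None] * height
    for card in range(num_cards):
        for y in lines_for_card(card, height, num_cards):
            frame[y] = [shade(card, x, y) for x in range(width)]
    return frame


# Tag each pixel with the card that drew it, to make the interleave visible.
frame = render_sli(height=6, width=4, shade=lambda card, x, y: card)
for row in frame:
    print(row)
```

The printed rows alternate between 0s and 1s, and at 1,024 x 768 each card would end up handling 384 of the 768 lines.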

Again, the benefit here wasn’t just the performance boost, but the ability to process even more work in a single pass. For example, a single Voodoo 2 could do trilinear filtering with mipmapping in a single pass, but would require two passes if you then added detail textures to the mix. Adding a second card meant this could all be done in one pass.

SLI was a good bragging rights technology, but it was only used by a select niche of gamers, and not only because buying two cards was expensive. SLI was notoriously fussy when it came to cards working together, and the only safe way to ensure compatibility was to buy two identical cards from the same manufacturer at the same time.

This cut down your options for upgrading. If you bought a single Voodoo 2 card at first, upgrading to SLI later would require you to look for an identical card. There were some exceptions, where cards from different manufacturers would cooperate with each other, but there was risk involved.

Plus, as we mentioned earlier, not every game supported multi-texturing in this way, which meant your two expensive cards were sometimes no faster than a single one. What’s more, having two cards put a lot of extra work on the CPU, which still handled a fair amount of the 3D graphics pipeline at this time, including transform and lighting.

Previously, you only needed a first-generation Pentium CPU to get a 3dfx Voodoo Graphics card working well at 640 x 480 in most games, and officially, the Voodoo 2 had the same requirements. However, SLI upped the pressure on the CPU. In 3dfx’s own tests, a 200MHz Pentium would run Quake II at 36fps in SLI mode, but this went all the way up to 67fps if you had a 300MHz Pentium II. You really needed to remove the CPU bottleneck if you wanted to run two Voodoo 2 cards.
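To put those two test results in proportion, here is a short Python sketch that compares the frame rate gain with the clock speed gain. The helper names are ours, and the only inputs are the figures quoted above.

```python
def gain(before, after):
    """How many times larger the second figure is than the first."""
    return after / before


def frame_time_ms(fps):
    """Time spent on each frame, in milliseconds."""
    return 1000.0 / fps


fps_gain = gain(36, 67)       # Quake II frame rates from 3dfx's tests
clock_gain = gain(200, 300)   # Pentium at 200MHz vs Pentium II at 300MHz

print(f"Frame rate: {fps_gain:.2f}x, clock speed: {clock_gain:.2f}x")
print(f"Frame time: {frame_time_ms(36):.1f}ms down to {frame_time_ms(67):.1f}ms")
```

The frame rate rises by roughly 1.86x for a 1.5x increase in clock speed, which hints that the Pentium II was bringing more than raw megahertz to the job.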

And there were more problems still, even if you bought two identical cards. For example, Creative’s website detailed a problem using two Creative cards with a 75Hz monitor refresh rate using the bundled cable. Its advice was to either use a single cable or run your monitor at 60Hz instead.

The Voodoo 2 was also still only a 3D accelerator, meaning you also needed a dedicated 2D graphics card, which you’d connect to the Voodoo 2 with a VGA loop-back cable round the back. You still only needed one loop-back cable for SLI, but once you had two Voodoo 2 cards, you were then using three of your PCI slots for graphics alone.

That’s a problem when most motherboards had few integrated components, and people often had at least a PCI modem and sound card as well. Not only did an SLI system mean potentially running out of PCI slots, but every expansion card also needed its own system resources, and resolving IRQ conflicts with SLI systems was a right pain.

3dfx Banshee

3dfx did have an answer to the multiple card problem, with a Voodoo 2-based card that could handle both 2D and 3D duties, much like the previous Voodoo Rush cards had done with the first Voodoo Graphics, but with just one main chip. Called the Banshee (denoted by ‘BAN’ on the chip), it was available with the new Accelerated Graphics Port (AGP) interface, which had started appearing on motherboards.

The Banshee effectively combined the resources of a Chuck chip and a single Bruce chip in one piece of silicon. It also upped the chip clock speed slightly to 100MHz, and used 100MHz SDRAM, rather than the 90MHz EDO memory on the standard Voodoo 2 cards.

The lack of a second texture unit meant the Banshee was significantly slower than the Voodoo 2 in games that supported dual texturing, but its small clock speed advantage meant the Banshee was very slightly quicker in other games.

However, concentrating all that processing power (4 million 350nm transistors) into a single 137mm² chip made the Banshee a toasty customer. It came with a heatsink on the chip as standard (some Voodoo 2 cards also had heatsinks on their chips), but that small heatsink still became seriously hot to the touch – it was well worth jerry-rigging a small fan to it if you wanted to keep it in check.

With largely disappointing performance, as well as thermal issues, the Banshee struggled to compete with other all-in-one cards, such as the Nvidia Riva TNT and the ATi Xpert cards, but it did lay the foundation for the single-chip Voodoo3 that appeared in 1999.

The last dedicated 3D card

The Voodoo 2 was a success for 3dfx, with its ability to run games at 800 x 600 and its improved performance making it highly popular with gamers. At this time, going down the 3dfx route was the only way to ensure compatibility with the company’s Glide API, which was required by some games, and which also sometimes looked better than Direct3D and OpenGL. You could only get reflective floor surfaces in Unreal using Glide, for example.

But the Voodoo 2 was the last of its era – the competition was busy making 2D/3D combo cards, such as Nvidia’s Riva TNT, and 3dfx later fully joined the club with its Voodoo3. The age of the dedicated 3D gaming accelerator was over, but the Voodoo 2 laid a large part of the foundation for parallelizing the 3D graphics pipeline with multiple processors.

Its last gasp came when 3dfx pulled all of its card manufacturing in house, and rebranded the Voodoo 2 as the V2-1000, a budget 3D card for people who couldn’t afford the Voodoo3, and later the Voodoo4 and Voodoo5 cards. The final Voodoo 2 whitepaper from 1999 even details a version that used SDRAM or SGRAM, rather than EDO memory, and could feature up to three Bruce chips on a single card, a setup that never saw the light of day.

We hope you’ve enjoyed reminiscing with us through this personal retrospective about the last 3dfx dedicated PCI 3D accelerator. For more articles about the PC’s vintage history, check out our retro tech page, and make sure you read our full guide on how to build a retro gaming PC, which will take a Voodoo 2 in one of its PCI slots.